The Ghost of SVR4

by Lee

for India

Introduction

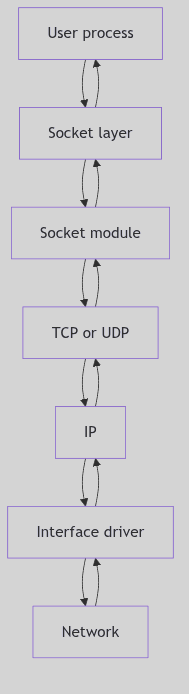

This technical guide provides comprehensive documentation of the SVR4 (System V Release 4) i386 kernel architecture and implementation. The documentation is derived from source code analysis and covers the major subsystems that comprise a complete Unix kernel.

Purpose and Scope

The SVR4 kernel represents a mature implementation of Unix system interfaces and internal architecture. This guide examines:

- Process Management: How processes are created, scheduled, signaled, and terminated

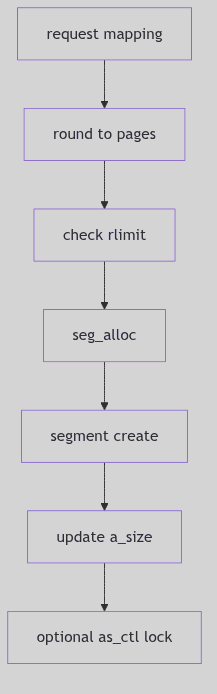

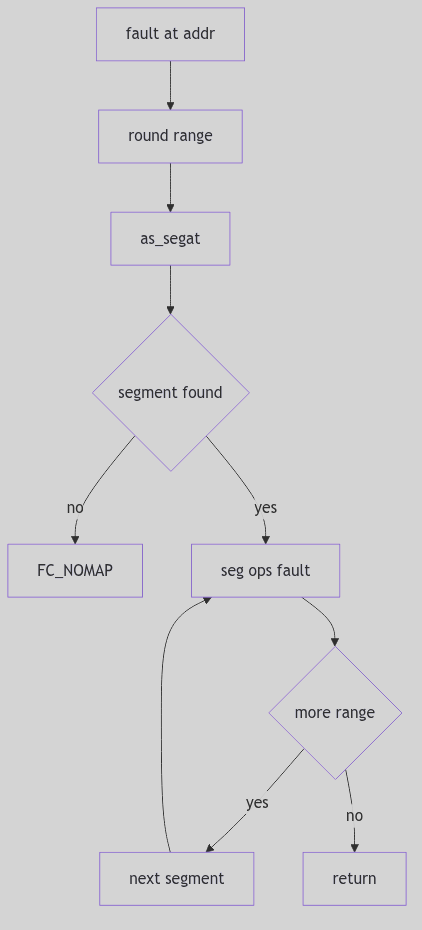

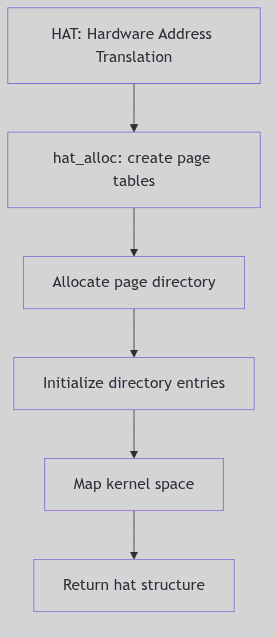

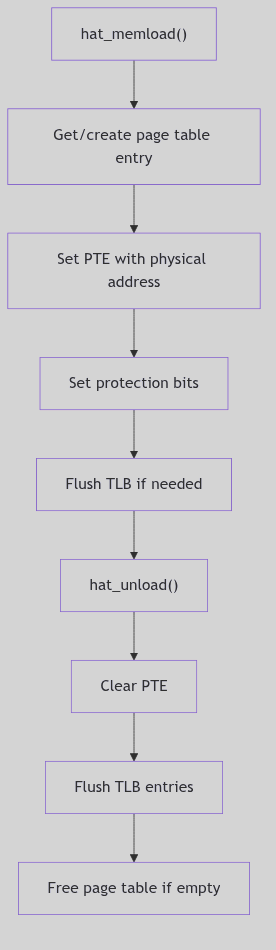

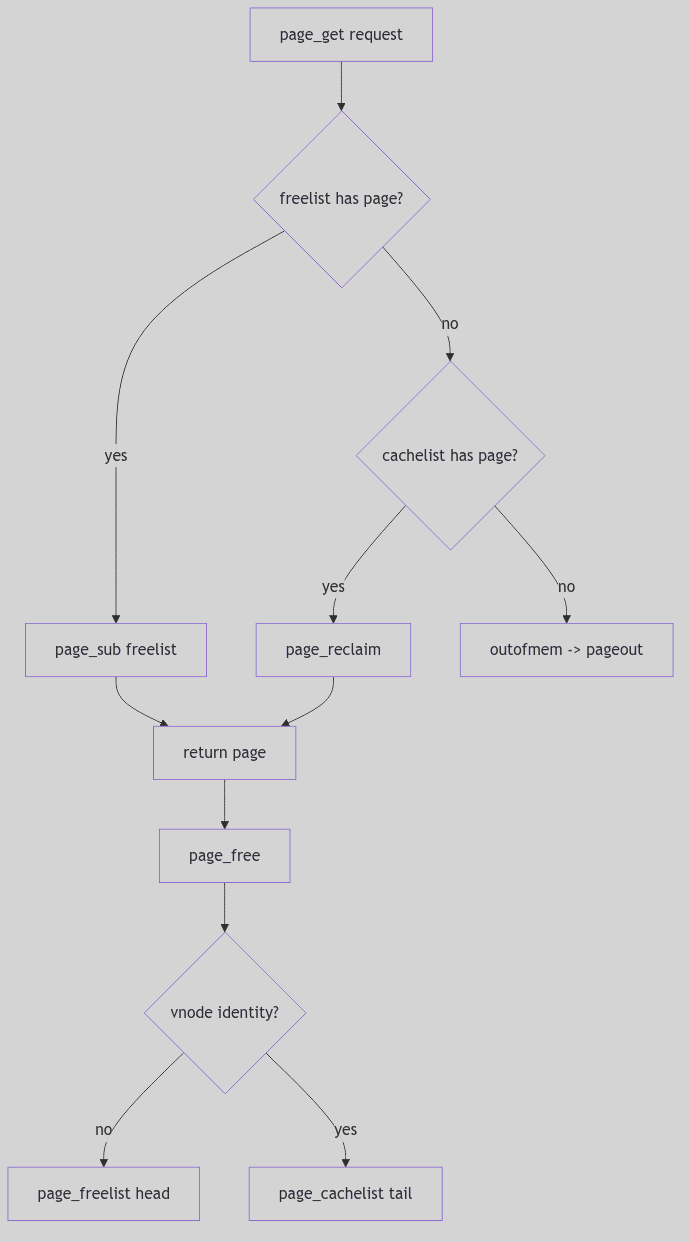

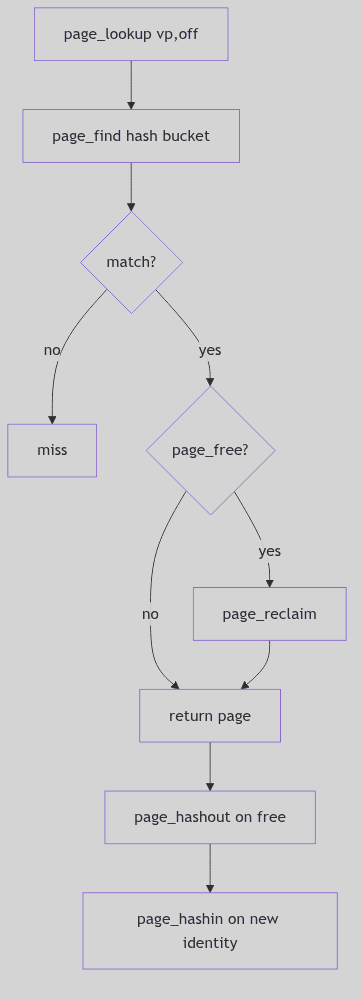

- Memory Management: Virtual memory implementation, paging, and memory allocation strategies

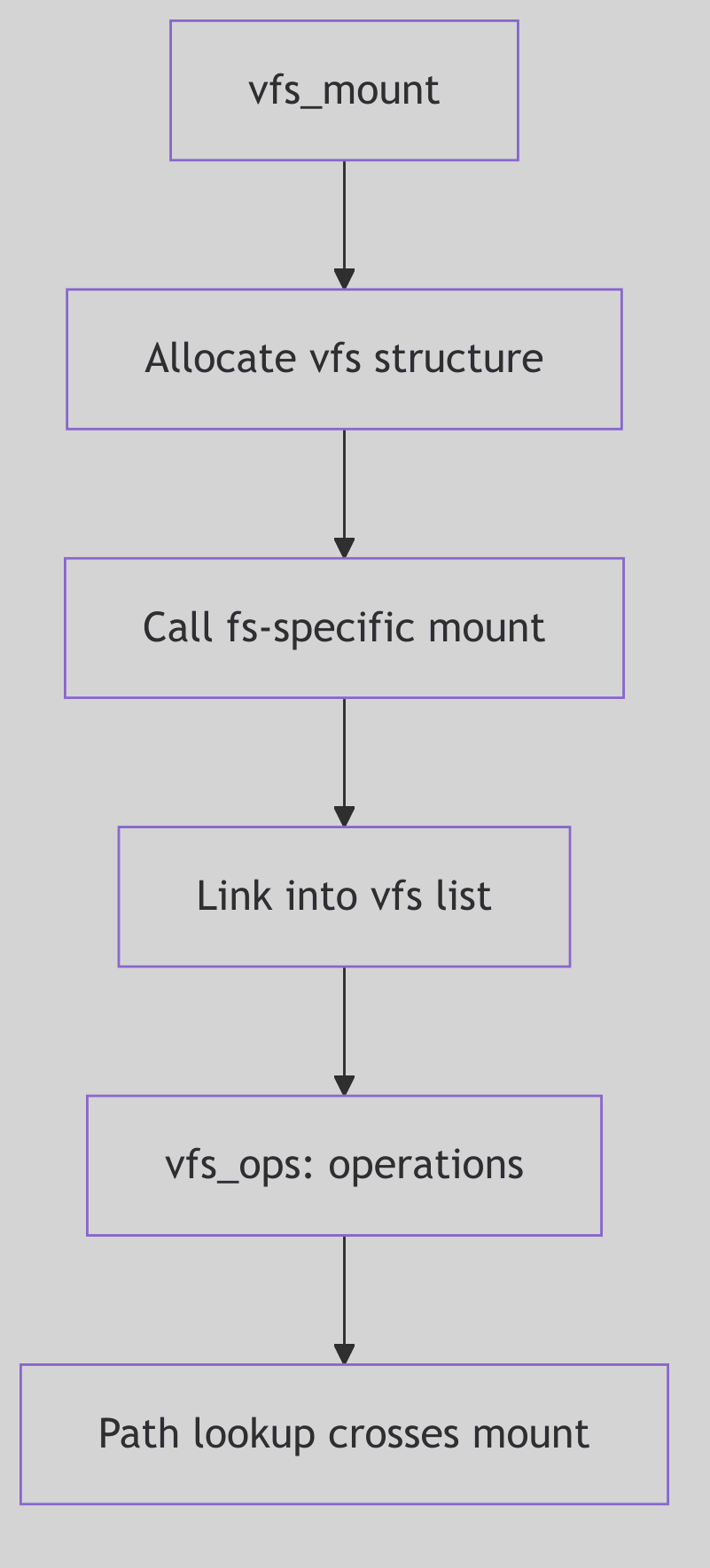

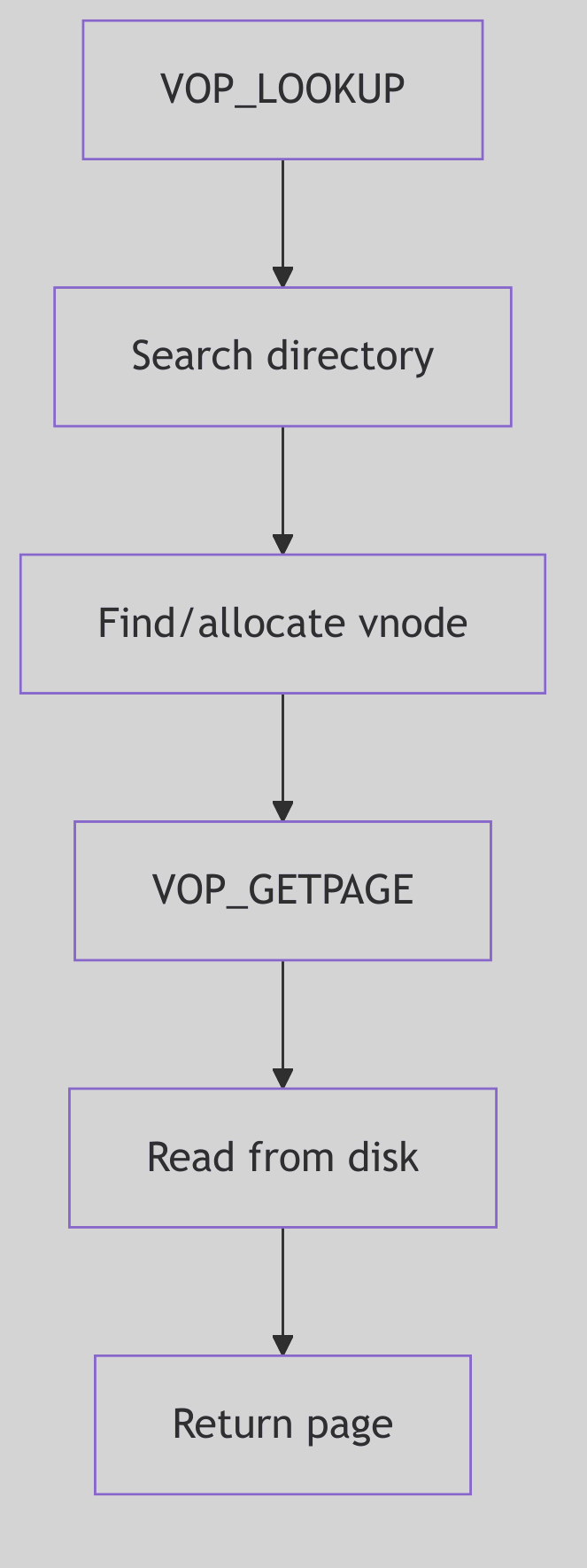

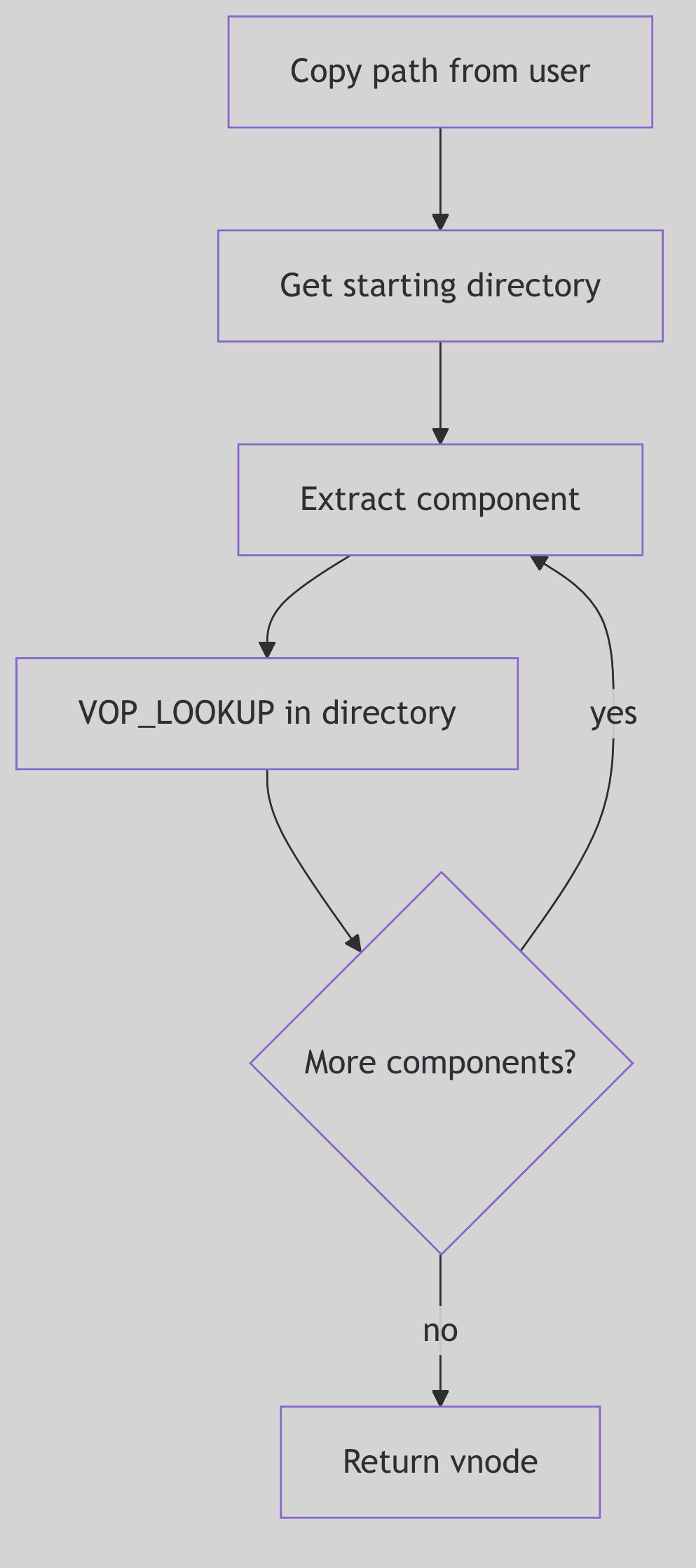

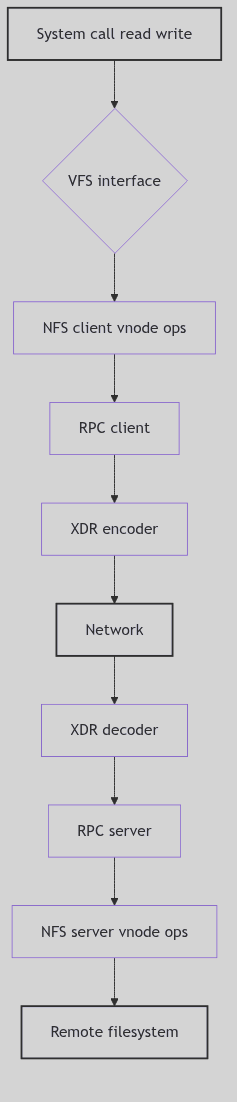

- File Systems: The Virtual File System layer and various filesystem implementations

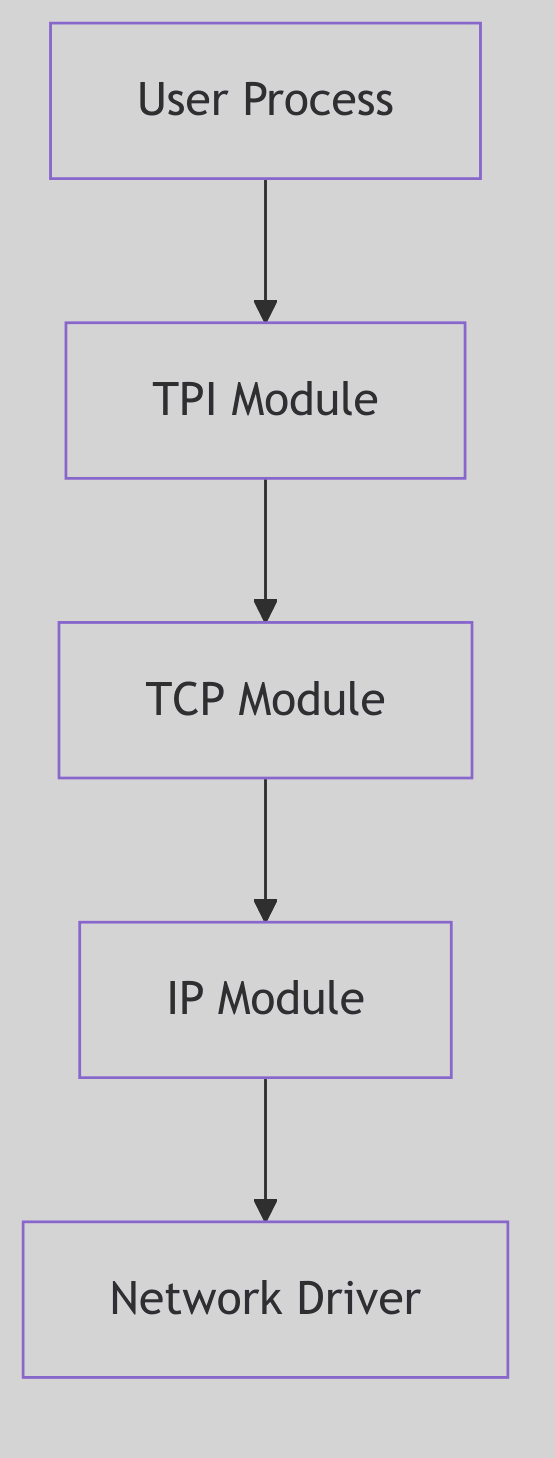

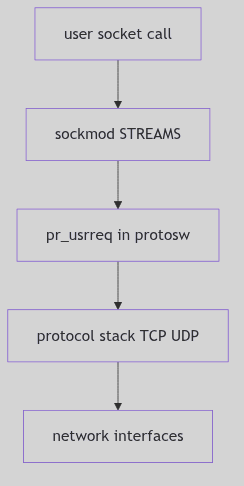

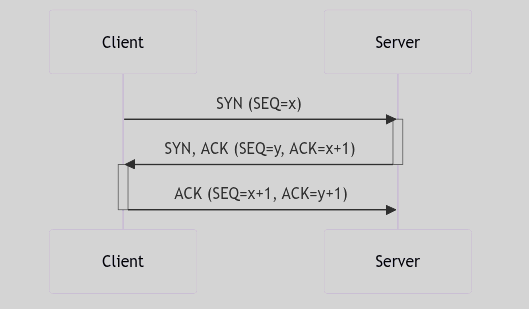

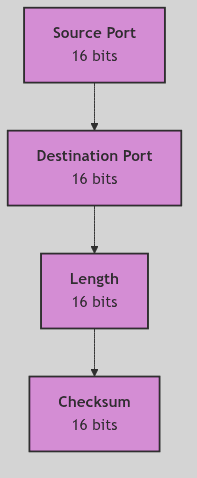

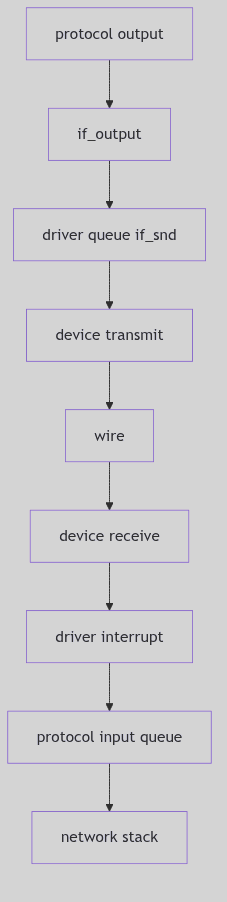

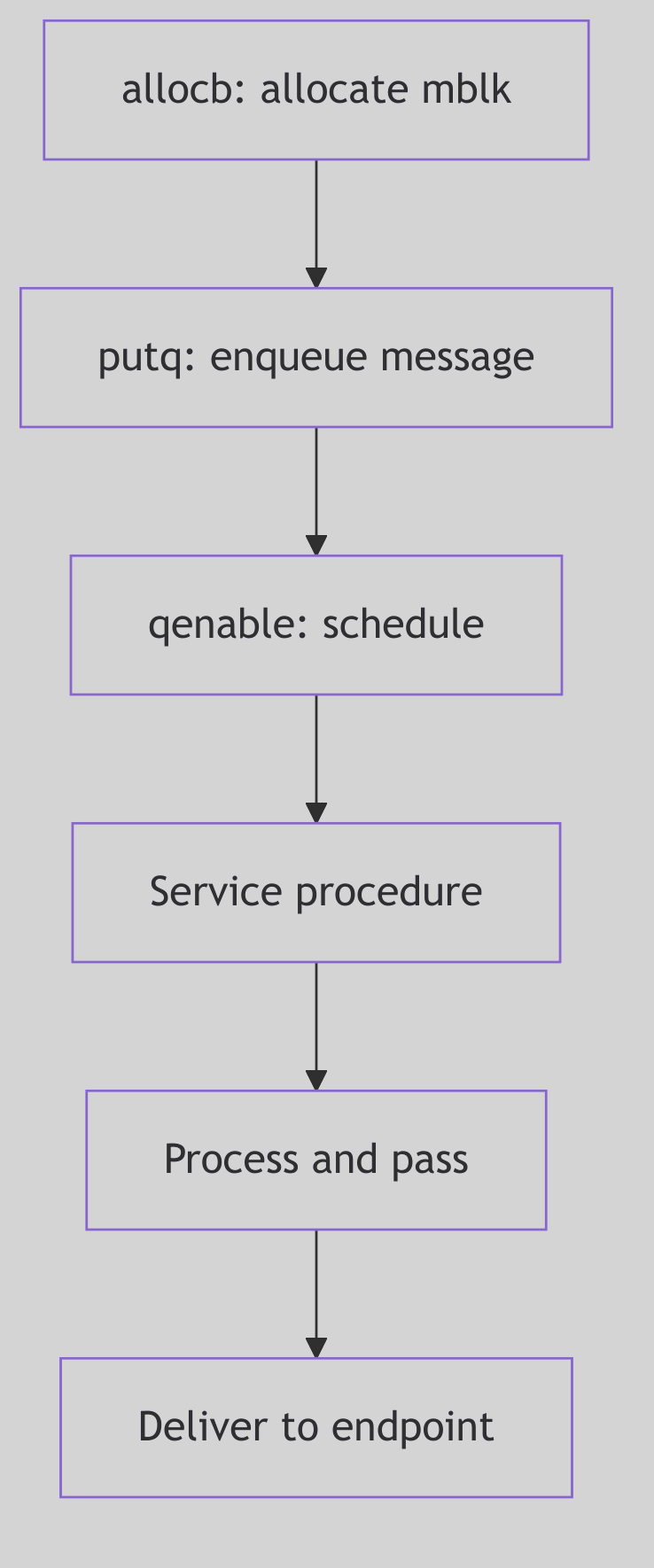

- Networking: Network stack architecture including TCP/IP and NFS

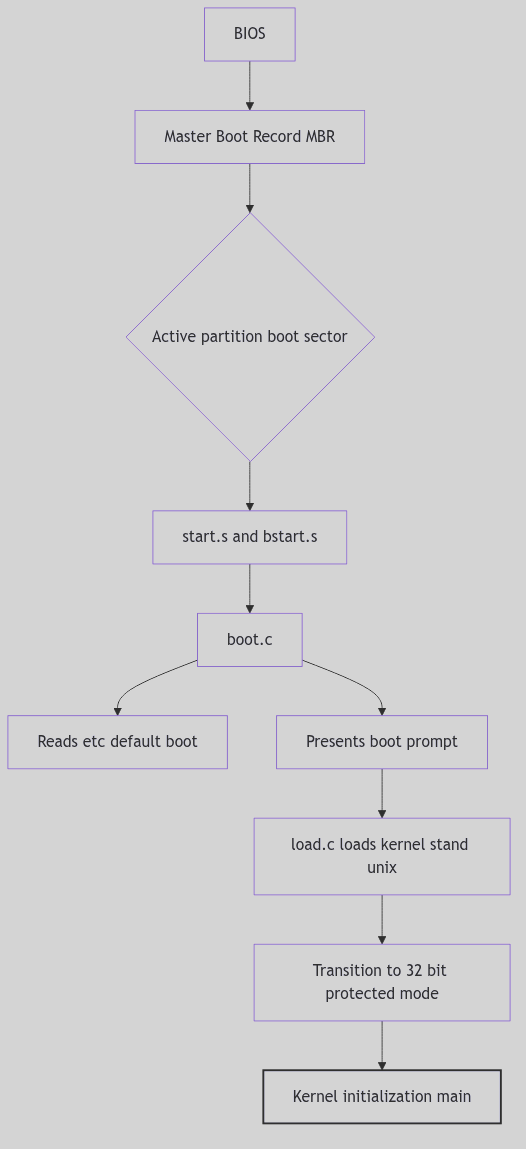

- I/O and Device Management: Device drivers, interrupt handling, and boot process

Documentation Methodology

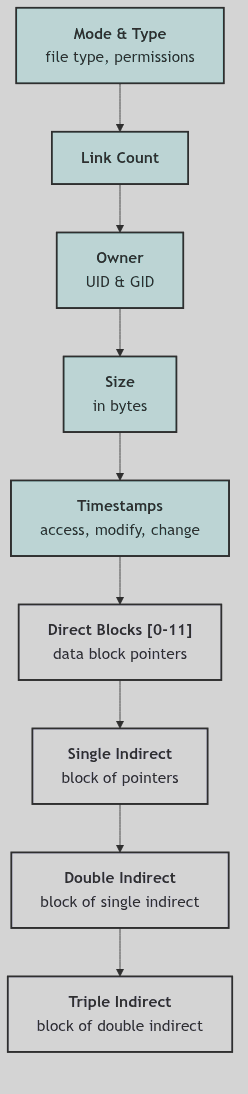

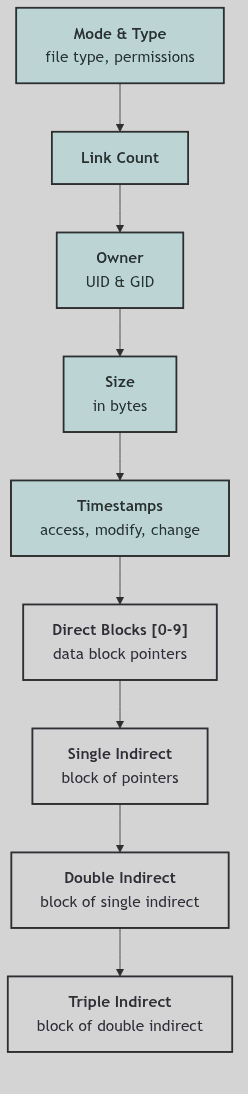

Each subsection follows a consistent structure:

- Technical Summary: A detailed explanation of the subsystem’s architecture and operation

- Code Analysis: Key code snippets showing critical implementation details

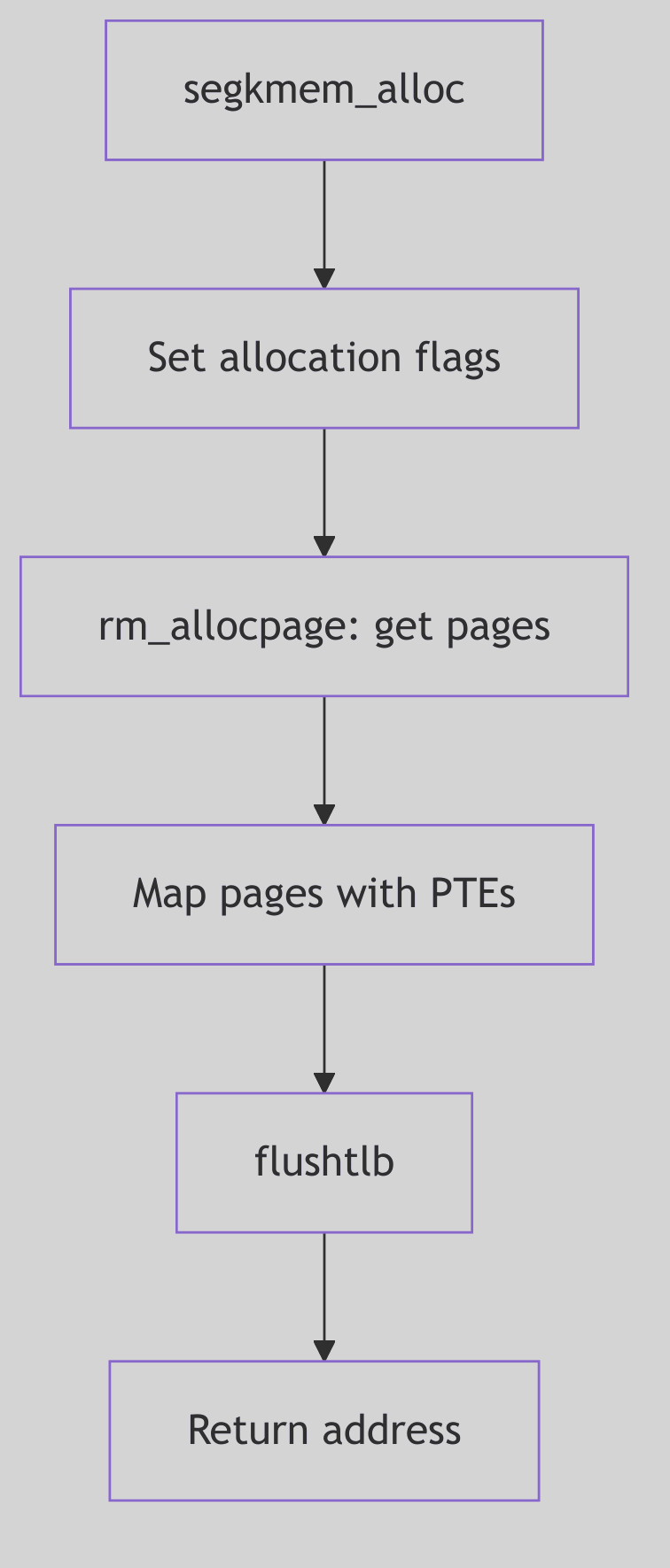

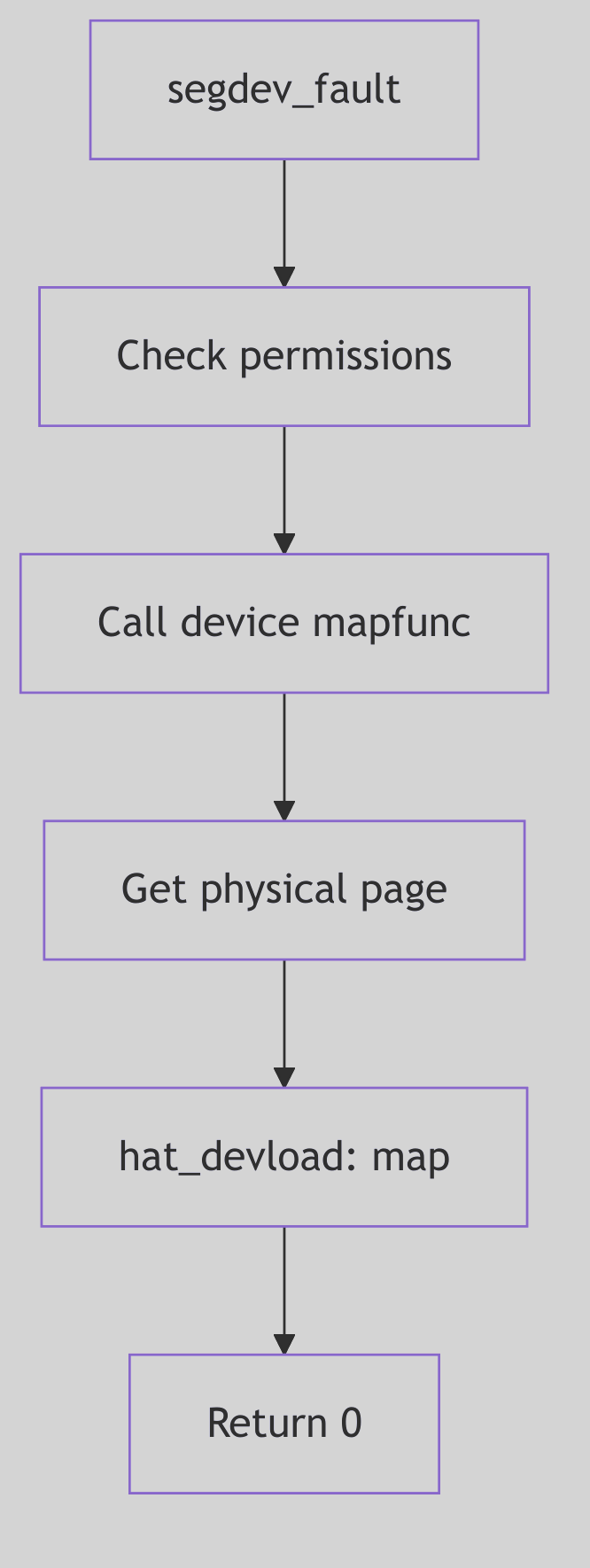

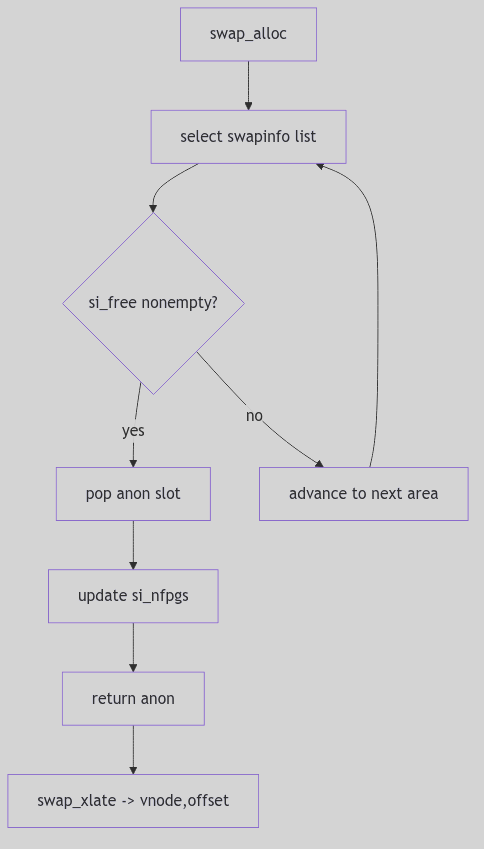

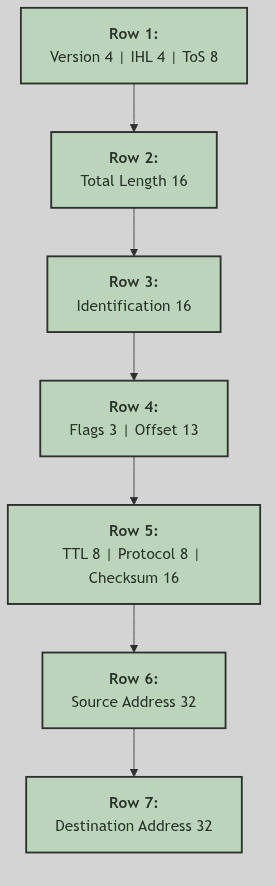

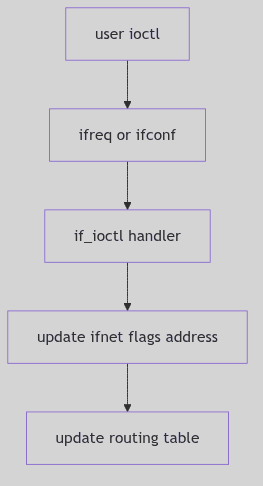

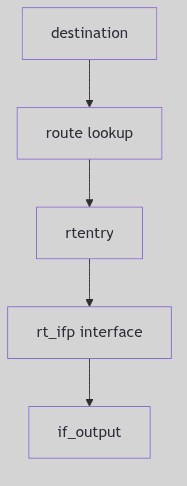

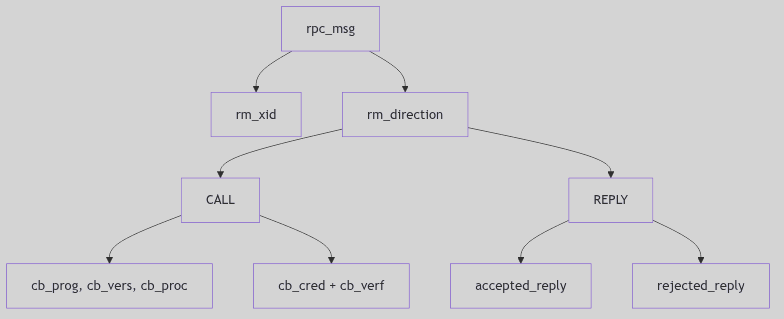

- Diagrams: Visual representations of state transitions, data structures, and flow control

The focus is on core logic and significant design decisions, excluding boilerplate and macro definitions that obscure understanding.

Source Code

The kernel source code analyzed is located in the svr4-src/uts/i386/ directory, with primary subsystems in:

os/- Core operating system functionsmem/- Memory managementfs/- File system implementationsnet/- Networking protocolsio/- Device drivers and I/O subsystem

Process Lifecycle

The Genesis and Demise of a Process: A Kernel’s Grand Orchestration

The SVR4 i386 kernel, a venerable maestro of multitasking, conducts a perpetual symphony of processes. Each process, from its first breath to its final sigh, embarks on an intricate dance with the kernel’s inner workings. To truly grasp the essence of SVR4—its elegant resource management, its disciplined isolation of execution, and its masterful orchestration of concurrent tasks—one must first become intimately acquainted with this grand lifecycle. We peel back the layers, not merely observing, but feeling the silicon pulse beneath the C logic.

The Spark of Life: Process Creation

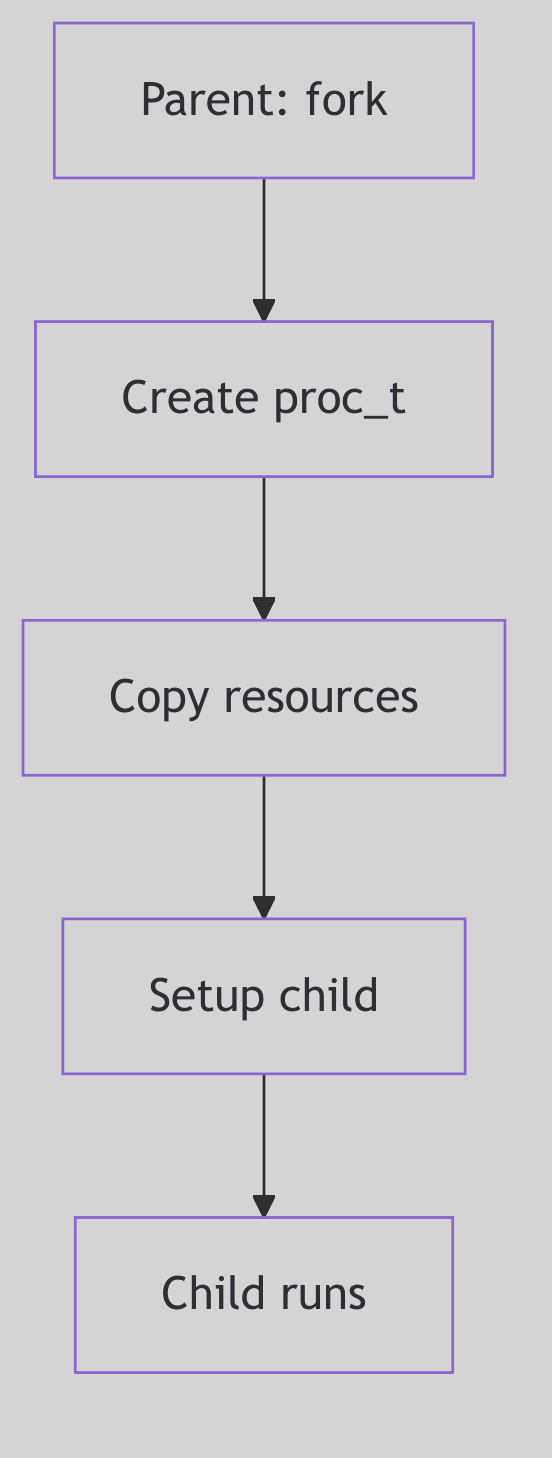

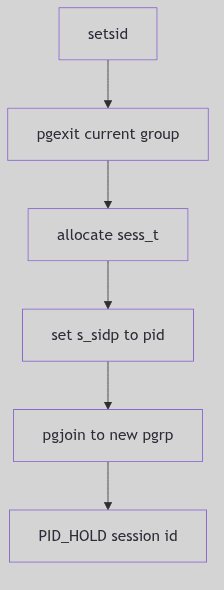

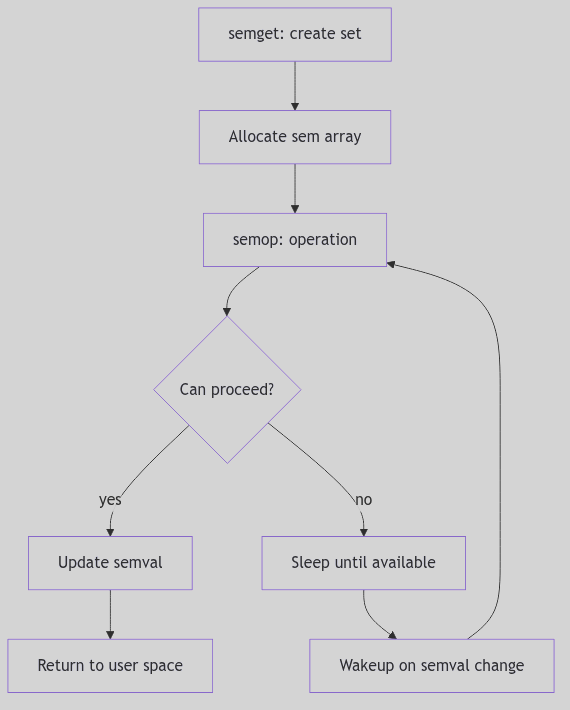

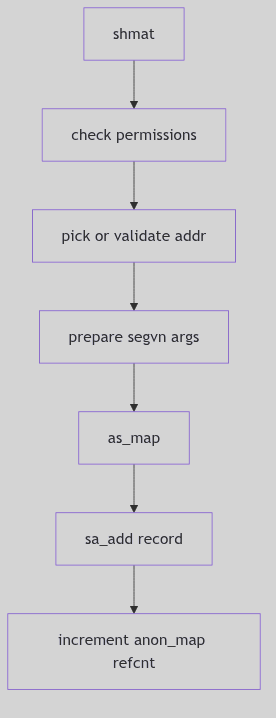

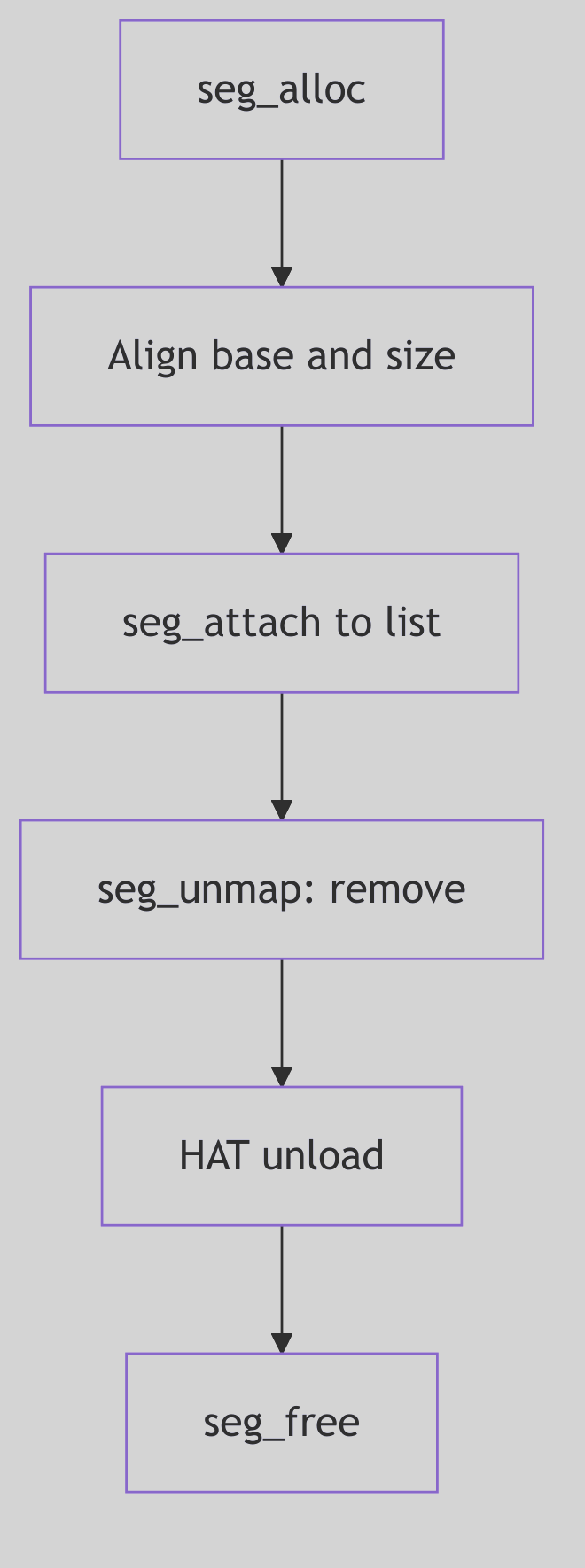

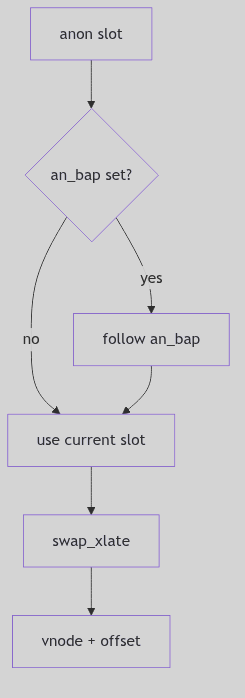

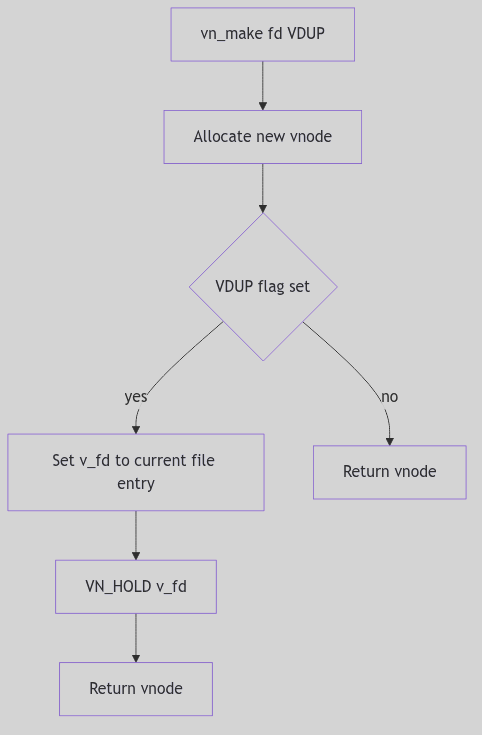

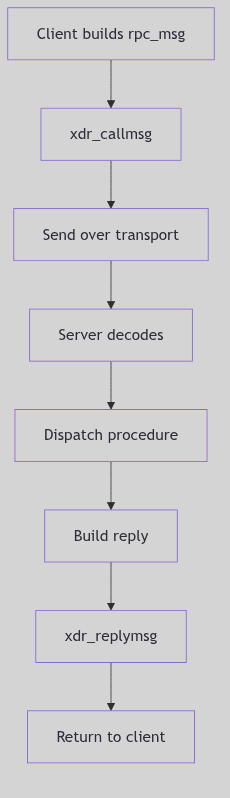

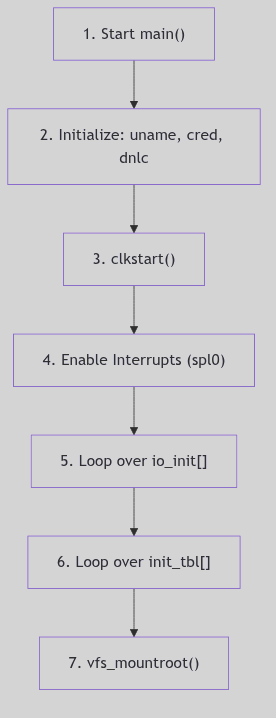

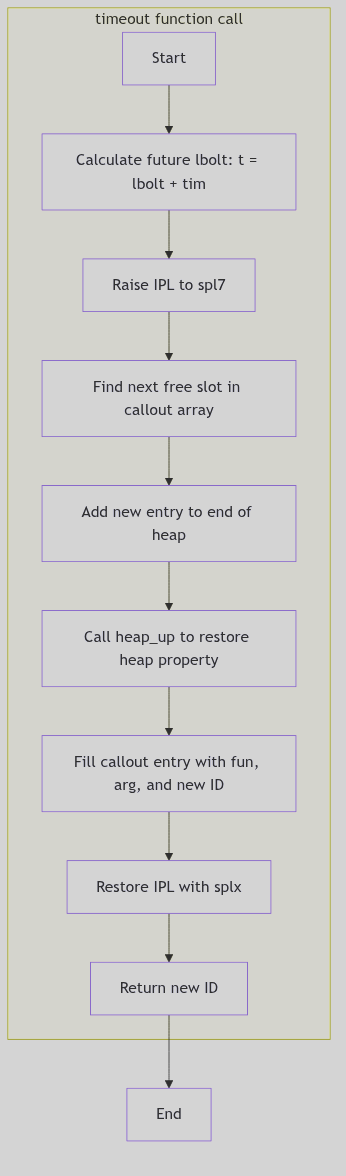

Figure 1.1.1: Fork Process Creation

Figure 1.1.1: Fork Process Creation

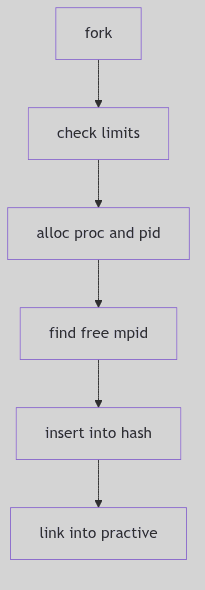

In the dominion of SVR4, a new process doesn’t simply appear; it is born. The primal act of creation is embodied by the fork() system call—a moment of digital fission where one process, the stoic parent, begets another, the eager child. Imagine, if you will, the kernel as a meticulous scribe, duplicating the parent’s entire universe: its memory segments, its open portals to the filesystem (file descriptors), and even the fleeting thoughts held within its CPU registers.

Upon this miraculous birth, a subtle yet profound distinction emerges. The fork() call, like an oracle, whispers the child’s unique Process ID (PID) to the parent, while to the child, it merely bestows a humble 0, a symbol of its nascent identity.

// A classic fork() scenario in SVR4

#include <unistd.h>

#include <stdio.h>

#include <sys/types.h> // For pid_t

int main() {

pid_t pid;

printf("Before fork, PID is %d\n", getpid()); // Line 6

pid = fork(); // Line 8

if (pid < 0) {

perror("fork failed");

return 1;

} else if (pid == 0) {

// Child process

printf("I am the child! My PID is %d, my parent's PID is %d\n", getpid(), getppid());

} else {

// Parent process

printf("I am the parent! My PID is %d, my child's PID is %d\n", getpid(), pid);

}

return 0;

}

Code Snippet 1.1: The Primal fork()

More often than not, this newborn child process hungers for its own destiny, a program distinct from its parent’s. This desire is fulfilled by the exec family of system calls (e.g., execve(), execl()). When an exec call is invoked, the kernel, with swift precision, incinerates the child’s current identity—its code, its data, its very stack and heap—and replaces it with the fresh, crisp image of a new program. Yet, through this metamorphosis, the process’s soul, its PID, remains steadfast, a constant identifier in a changing world.

Process Lifecycle - Orchestra

Process Lifecycle - Orchestra

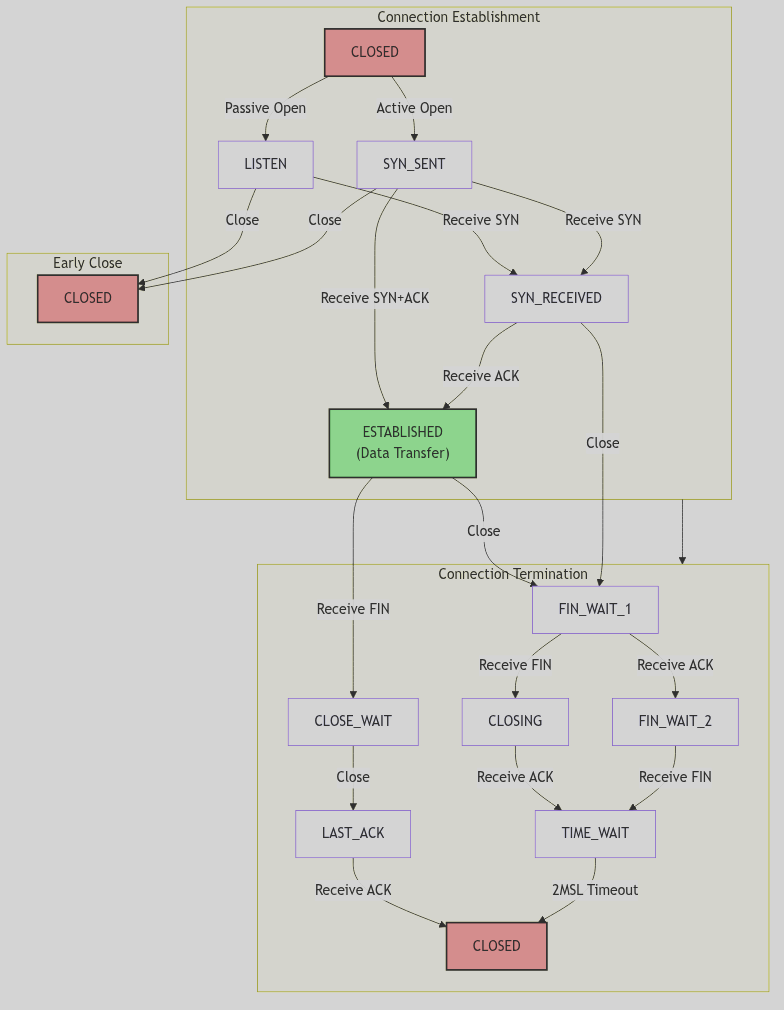

fork() vs. vfork(): A Tale of Two Births and Kernel Optimization

But SVR4, ever the pragmatist, offered a sibling to fork(): the enigmatic vfork(). This wasn’t merely a naming convention; it was an optimization born from the austere memory landscapes of 1988. Unlike its memory-duplicating cousin, vfork() was a gambit. The child, rather than receiving its own pristine copy of the parent’s address space, was instead granted temporary stewardship within the parent’s very own address space.

The parent, a benevolent but watchful guardian, would then pause, suspended in time, awaiting the child’s crucial next move: either an exec() to shed its shared skin and embrace its own program, or a swift _exit() to gracefully depart. This daring optimization elegantly sidestepped the heavy toll of copying an entire address space, a true boon when an exec() was imminent.

However, like any daring maneuver, vfork() came with its own perilous tightrope walk. Should the child, in its shared dominion, dare to modify the parent’s address space before its inevitable exec(), chaos could ensue. The kernel, ever vigilant, enforces this strict contract to prevent a child’s nascent scribbles from corrupting the parent’s pristine canvas.

The Ghost of SVR4: Memory Constraints of Yore

In the dimly lit server rooms of 1988, memory was a precious commodity, measured in megabytes rather than gigabytes. The full duplication performed by

fork()—especially for large processes—could be a performance bottleneck.vfork()was a clever, if slightly perilous, solution to mitigate this. It exploited the commonfork()-then-exec()pattern, avoiding an expensive copy that would immediately be discarded.Modern Contrast (2026): Modern

fork()on Linux uses Copy-On-Write: parent and child remain runnable and only incur page copies on write.vfork()still exists, but it retains its historical contract by suspending the parent until the child callsexec()or_exit(). The optimization is now mostly transparent, and the explicitvfork()dance is used sparingly, but the distinction remains: COWfork()preserves parallelism, whilevfork()trades it for speed.

The u-Block: A Glimpse into a Process’s Soul (SVR4 Edition)

Central to the SVR4 process’s identity, beyond its PID, was the venerable User Area, affectionately known as the u-block. This kernel-resident data structure, unique to each process, was a veritable treasure trove of context. It housed the process’s kernel stack, its per-process open file table, signal handling information, error numbers, and a myriad of other critical runtime details. In SVR4 terms, the u-block and the proc table entry together serve as the Process Control Block (PCB): the hardware context lives in the u-block, while system-wide identity and state live in proc.

/* Excerpted fields from struct user (sys/user.h) */

typedef struct user {

char u_stack[KSTKSZ]; /* kernel stack */

char u_stack_filler_1[2];

char u_fpvalid; /* fp state valid */

char u_weitek; /* uses weitek */

struct tss386 *u_tss; /* pointer to user TSS */

ushort u_sztss; /* size of tss */

ulong u_sub; /* stack upper bound */

proc_t *u_procp; /* pointer to proc structure */

int u_arg[MAXSYSARGS]; /* args to current syscall */

label_t u_qsav; /* longjmp label */

char u_error; /* return error code */

struct rlimit u_rlimit[RLIM_NLIMITS]; /* resource limits */

k_sigset_t u_sigonstack; /* signals on alt stack */

k_sigset_t u_sigrestart; /* restart syscalls */

k_sigset_t u_sigmask[MAXSIG]; /* signals held in catcher */

void (*u_signal[MAXSIG])(); /* dispositions */

} user_t;

Code Snippet 1.2: The Intimate u_block (Excerpted)

The Ghost of SVR4: The u-block’s Legacy

The u-block was a cornerstone of SVR4 process management, a compact and efficient way to store per-process kernel context. Its design reflected a time when every byte of memory was carefully accounted for. It served as a critical bridge between the generic

proctable entry (which held system-wide process information) and the specific, rapidly changing context of a process’s kernel execution.Modern Contrast (2026): In contemporary Linux, the concept of a monolithic u-block has evolved. Its responsibilities are now distributed across various fields within the comprehensive

struct task_structand related data structures. While thetask_structin Linux is far more extensive and integrated, the spirit of the u-block—that direct, intimate connection to a process’s ephemeral state—is still palpable within the modern kernel’s design. The SVR4 u-block stands as an elegant predecessor, showing how fundamental information was once encapsulated.

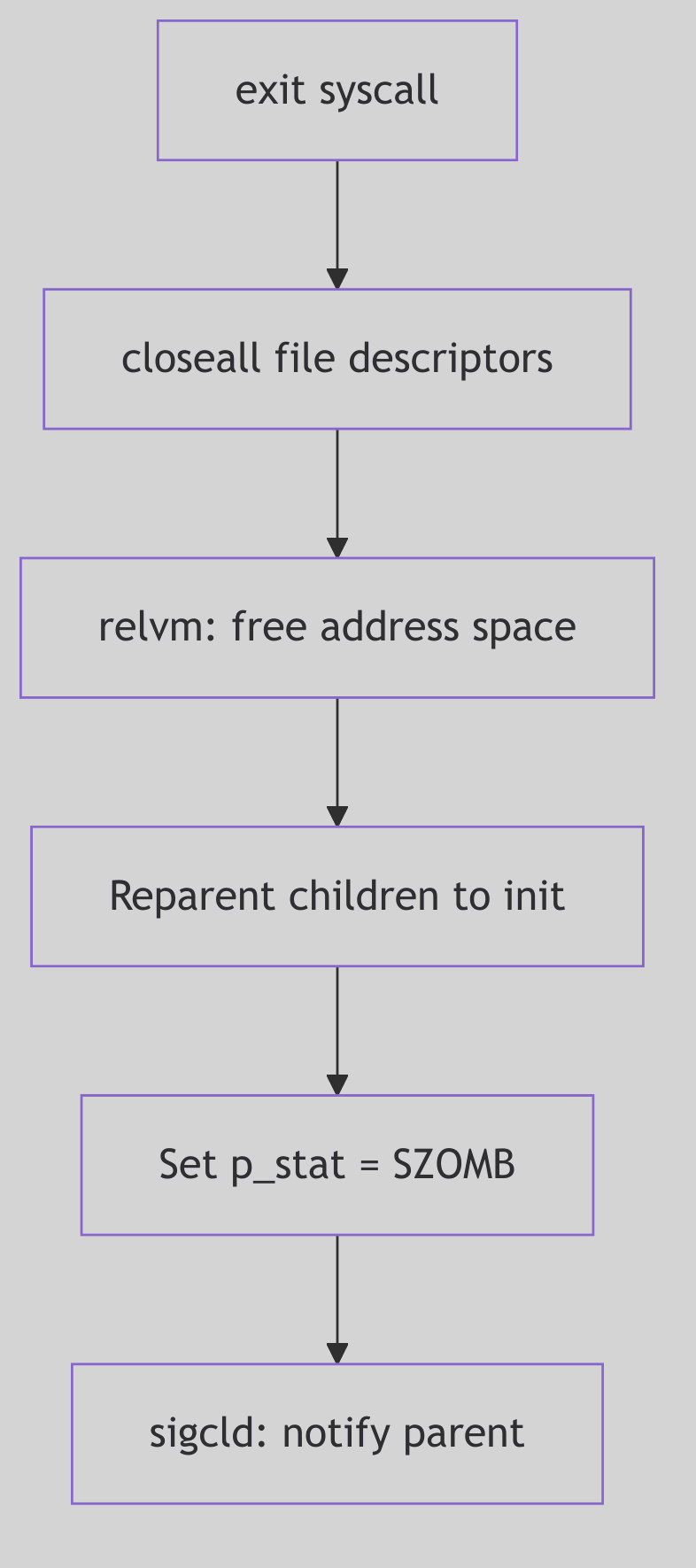

The Final Curtain: Process Termination

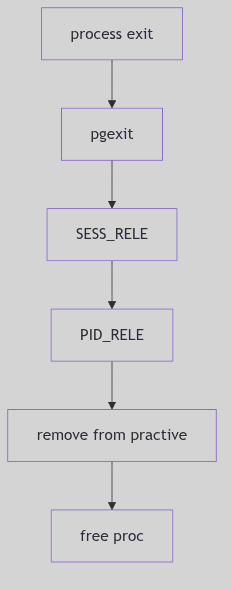

Figure 1.1.2: Process Exit and Termination

Figure 1.1.2: Process Exit and Termination

Alas, even the most vibrant process must, at some juncture, meet its cessation. This can occur either by graceful self-annihilation or by an unforeseen, often forceful, intervention.

-

Voluntary Departure: A process, having fulfilled its purpose, may choose to exit gracefully by invoking

exit()or_exit(). The_exit()call, a more direct route, bypasses the user-space cleanup routines (likeatexit()handlers orstdiobuffer flushing), making it suitable for children destined for an immediateexec()or for robust error handling where user-space state is untrustworthy. Returning from themain()function in C is, in essence, a call toexit(). -

Involuntary Eviction: The kernel, or another process, can forcibly terminate a process, most often through the delivery of a signal. A

SIGKILL, for instance, is the ultimate, unblockable eviction notice, while aSIGSEGV(segmentation fault) marks a catastrophic misstep in memory access, compelling the kernel to intervene.

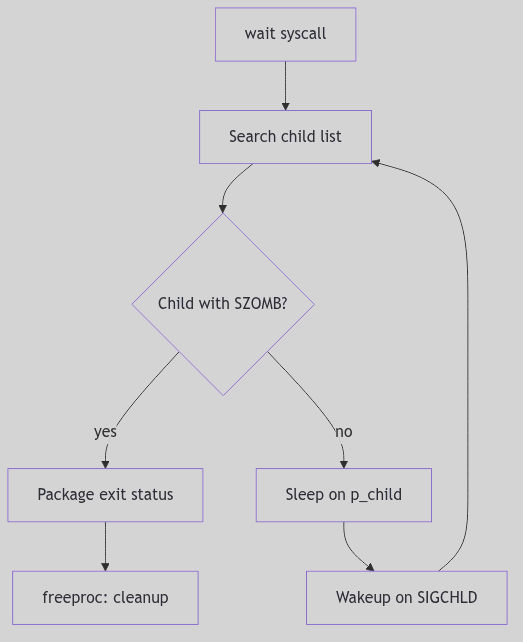

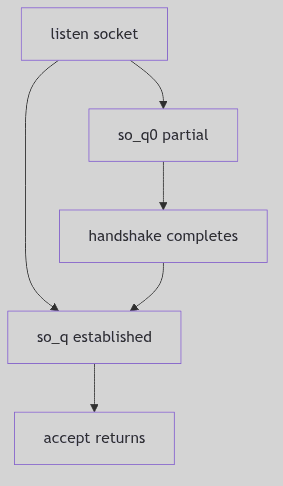

Upon termination, a process does not vanish instantaneously. Instead, it lingers as a spectral “zombie” process. In this enigmatic state, its computational essence is gone, its resources largely reclaimed by the kernel. Yet, a vestige remains: its entry in the kernel’s Process Table and its u-block/PCB context, preserved long enough to hold the exit status. This spectral existence serves a vital purpose: it allows the parent process, through the wait() or waitpid() system calls, to collect the child’s final report—its exit status—and only then does the kernel fully expunge the zombie, “reaping” its last kernel resources.

Should a parent process prematurely depart this digital realm before its children, those orphaned processes are not left to wander the wilderness. Instead, they are nobly adopted by the venerable init process (always PID 1), the primordial ancestor of all user-space processes. init, the steadfast caretaker, assumes the responsibility of patiently wait()ing for these adopted children, dutifully reaping their zombie forms when their time comes. This ensures that no process lingers eternally, hogging precious Process Table entries.

The Ghost of SVR4: The Importance of Reaping

Unreaped zombie processes, while consuming minimal resources, can exhaust the finite number of entries in the kernel’s Process Table. In the SVR4 era, this was a tangible risk, capable of leading to system instability by preventing new processes from being created. The

wait()family of calls wasn’t just good practice; it was a necessary ritual to maintain kernel hygiene.Modern Contrast (2026): While modern kernels have larger process tables, the principle remains. Zombie processes, if accumulated in large numbers, can still signify application bugs (e.g., parent processes not

wait()ing for their children) and can indeed consume resources, albeit mostly Process Table entries, which are still finite. Theinitadoption mechanism remains a cornerstone of UNIX-like systems, a testament to the foresight of early designers.

The Dance of Existence: Process States

Throughout its ephemeral existence, a process whirls through a ballet of states, each signifying its current relationship with the CPU and the kernel’s resources. The SVR4 kernel, with its meticulous oversight, maintains a Process Table—a grand ledger of proc structures, each a detailed dossier on a single process. This proc structure is the central repository, containing its PID, its current state, its security credentials, and a web of pointers to other critical kernel data, such as our familiar u-block.

The primary states in this grand choreography include:

- Running: The process is, at this very instant, clutching the CPU, executing its instructions with fervor.

- Ready (Runnable): Poised and eager, the process is perfectly capable of running but is momentarily sidelined, patiently awaiting its turn on the CPU, a hopeful contender in the scheduler’s queue.

- Sleeping (Waiting): The process has temporarily retired from active computation, having voluntarily surrendered the CPU. It slumbers, awaiting a specific event—perhaps the completion of an I/O operation, the arrival of a signal, or the tick of a timer. It’s in a state of suspended animation, ready to awaken when its desired event materializes.

- Zombie: As discussed, this is the spectral aftermath of a terminated process, awaiting its parent’s final rites of

wait()orwaitpid(). - Stopped: A process temporarily frozen in time, usually by an external force—a

SIGSTOPorSIGTSTPsignal—often seen in the graceful mechanics of shell job control. It can be resumed by aSIGCONTsignal.

The Ghost of SVR4: Process Groups and Sessions for Order

The SVR4 kernel introduced sophisticated mechanisms like process groups and sessions not merely as abstract concepts, but as fundamental tools for imposing order on the chaos of multiple processes.

Process Groups are collections of related processes, typically created by a shell pipeline (e.g.,

command1 | command2). Signals, such asSIGINT(Ctrl+C), are often delivered to an entire process group, allowing for collective control. This was crucial for shell job control, enabling a user to suspend (SIGTSTP), resume (SIGCONT), or terminate an entire pipeline of commands with a single keystroke.Sessions elevate this concept further, encapsulating one or more process groups. A session typically corresponds to a login session or a terminal, acting as an insulating layer. When a terminal closes, a

SIGHUPsignal is often sent to the session leader, which can then propagate it to its process groups, gracefully terminating the applications associated with that session. This structured hierarchy was vital for managing interactive user environments and background jobs reliably.

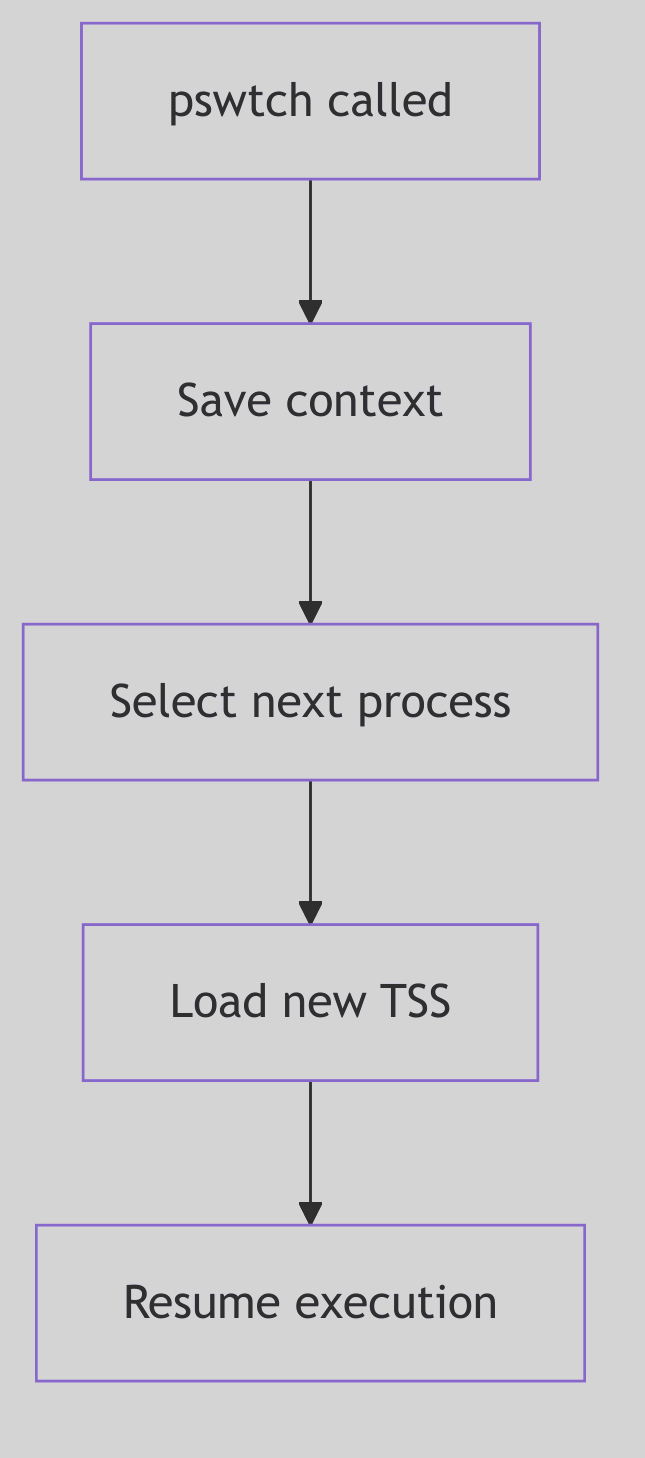

The Kernel’s Hand-Off: Context Switching

The very illusion of concurrency—of many processes seemingly running simultaneously on a single CPU—is conjured by the kernel’s exquisite mastery of context switching. This is the heart of multitasking: the instantaneous, surgical act of preserving the entire operational state of the currently executing process, and then, with equal precision, reinstating the state of another.

When the scheduler, having made its momentous decision, dictates a change, the kernel embarks on a critical, low-level ballet. The CPU’s fleeting memories are meticulously archived:

- General-Purpose Registers: The immediate workspace of the CPU, holding operands and results.

- Program Counter (Instruction Pointer): The CPU’s bookmark, indicating the next instruction to execute.

- Stack Pointer: The current top of the process’s stack.

- Process Status Word (PSW): A register reflecting the CPU’s current operational mode, flags, and interrupt status.

- Memory Management Unit (MMU) State: Crucially, the base register pointing to the process’s page tables (e.g.,

CR3on x86), which defines its unique virtual address space.

This meticulously saved “context” is stored within the process’s proc structure (or its u-block, or a combination thereof), a snapshot awaiting the process’s eventual return to the CPU.

At the core of this resurrection, particularly in SVR4, lies the often-unsung hero: the resume() function.

The resume() Function: Awakening a Slumbering Giant

The resume() function, a highly optimized, architecture-dependent assembly routine, is the incantation that breathes life back into a scheduled process. It is the final, atomic act of the context switch. Its mission: to load the previously saved CPU state of the chosen newproc into the physical CPU registers. On i386 this logic lives in ml/misc.s (ml/misc.s:1339-1370).

In practice, resume() performs the inverse operation of context saving:

- It receives pointers to the

procstructures (or their relevant context saving areas) of theoldproc(the process being switched from) andnewproc(the process being switched to) to save the current CPU state (registers, stack pointer, program counter) ofoldproc. - It updates the kernel’s internal pointers to reflect the currently executing process.

- Crucially, it restores the saved CPU state of

newproc, including itsCR3register to point tonewproc’s page tables, effectively switching the active virtual address space. - It then “returns” into the

newproc’s context, making it appear as ifnewprocwas simply suspended and is now continuing from where it left off.

// Schematic outline for resume() (x86 specific)

resume(oldproc, newproc):

; Save oldproc's context (often done by the caller or an interrupt handler)

; ...

; Switch current process pointers (conceptual)

mov EAX, newproc_ptr

mov [current_proc_ptr], EAX ; Update global current process pointer

; Restore newproc's MMU context (CR3 register)

mov EAX, [newproc_page_table_base]

mov CR3, EAX ; This is where the virtual address space magic happens!

; Restore newproc's general purpose registers, stack pointer, and instruction pointer

pop EBP

pop ESI

pop EDI

pop EDX

pop ECX

pop EBX

pop EAX

mov ESP, [newproc_kernel_stack_pointer] ; Load new kernel stack

; Finally, return to the new process's execution flow

ret_from_interrupt_or_call ; Jumps to newproc's saved EIP/CS

Code Snippet 1.3: The resume() Function’s Orchestration (Schematic)

The resume() function is an exquisite piece of engineering, often residing at the very boundary between C code and the raw power of assembly. It operates with surgical precision, ensuring that the transition between processes is seamless and efficient, a blink-and-you-miss-it moment that underpins the entire multiprocessing paradigm.

Figure 1.1.3: Wait and Zombie Reaping

Figure 1.1.3: Wait and Zombie Reaping

Process Scheduling

The Time-Keeper’s Art: Process Scheduling

Having explored the fascinating genesis and eventual cessation of a process in SVR4, let us now turn our gaze to the ballet master itself: the Process Scheduler. This unsung hero within the kernel is the ultimate arbiter of CPU time, meticulously deciding which hungry contender gets to taste the precious silicon next. In a world teeming with processes, each clamoring for attention, the scheduler’s role is akin to a benevolent, yet firm, traffic controller, ensuring that the CPU’s finite resources are distributed with purpose, policy, and a touch of SVR4 elegance.

The Grand Conductor: SVR4 Scheduling Classes

SVR4, with its characteristic architectural foresight, did not merely present a monolithic scheduling policy. Instead, it unveiled a highly flexible, multi-tiered framework—the Scheduling Classes. This innovative design allowed the kernel to employ distinct algorithms tailored to the very nature of the processes they governed. No longer would a time-critical sensor process be treated with the same casual indifference as a background compilation job. Each had its class, its policy, and its priority.

The primary dramatis personae in this scheduling theater were:

-

Real-Time (RT): These are the prima donnas of the kernel, demanding and receiving the highest accolades of priority. An RT process, once runnable, seizes the CPU with an almost tyrannical grip, running uninterrupted until it either voluntarily yields (blocks) or explicitly steps aside. Their existence is predicated on absolute predictability, making them indispensable for applications where temporal guarantees are not merely desirable, but mission-critical—think industrial control systems or specialized multimedia streams.

-

System (SYS): The backbone of the kernel itself, this class is reserved for the essential gears and levers of the operating system. Core kernel threads, interrupt handlers, and critical system daemons reside here. SYS processes operate at fixed, elevated priorities, ensuring that the kernel, the very heart of the system, remains responsive, vigilant, and unburdened by the whims of user-space frivolity.

-

Time-Sharing (TS): This is the bustling marketplace of user processes, the common folk of the SVR4 kingdom. TS processes are scheduled with an emphasis on fairness and equitable CPU distribution. They dance to a round-robin tune, each receiving a quantum of CPU time (a “time slice”), after which they are politely, but firmly, ushered back into the run queue. Their priorities are not static decrees but dynamic whispers, constantly adjusted by the kernel’s keen observation of their CPU appetites and periods of rest. A process that has recently been CPU-hungry might find its priority gently nudged downwards, while one that has patiently slept might receive a boost, ensuring that no single process hoards the CPU indefinitely.

The Ghost of SVR4: The Genesis of Modular Scheduling

The SVR4 scheduling classes were a remarkably prescient design choice in their era. Before this, many UNIX-like systems employed simpler, more monolithic scheduling algorithms that struggled to balance the demands of real-time responsiveness with general-purpose time-sharing. The modularity introduced by SVR4 allowed for greater flexibility and extensibility, foreshadowing the plugin-based scheduling architectures seen in modern kernels.

Modern Contrast (2026): Modern Linux kernels, while not explicitly using the “SVR4 classes” nomenclature, adopt similar multi-policy approaches. The Completely Fair Scheduler (CFS) handles general time-sharing, while

SCHED_FIFOandSCHED_RRcater to real-time needs, and aSCHED_DEADLINEclass provides even stronger temporal guarantees. The SVR4 design laid crucial groundwork for separating policy from mechanism in kernel scheduling, a principle that endures to this day.

Process Scheduling - Restaurant Kitchen

Process Scheduling - Restaurant Kitchen

The SVR4 Scheduler: A Priority-Driven Maestro

At the operational core of the SVR4 scheduler lies a finely tuned priority system, managed through a series of run queues. Imagine a grand antechamber with many doors, each labeled with a priority level within a specific scheduling class. When a process becomes runnable—awakening from a sleep, or finishing an I/O operation—it is meticulously placed onto its appropriate run queue.

When a CPU, that insatiable hunger for instructions, becomes available (perhaps because a running process decided to block, or its allotted time slice expired), the scheduler springs into action. Its directive is absolute: always select the highest-priority runnable process from the highest-priority non-empty scheduling class.

Within the Time-Sharing class, this mechanism becomes particularly nuanced. The scheduler isn’t merely a static gatekeeper; it’s an active observer. Processes that tirelessly grind away, consuming vast tracts of CPU time, will find their dynamic priorities gently, but persistently, decreasing. Conversely, those processes that have patiently waited, perhaps slumbering for an I/O event, will experience a compensatory increase in their priority. This astute mechanism, often termed “priority aging”, is the scheduler’s subtle art of preventing CPU starvation, ensuring that even the most humble Time-Sharing process will eventually receive its moment in the silicon sun.

The Interruption: Preemption

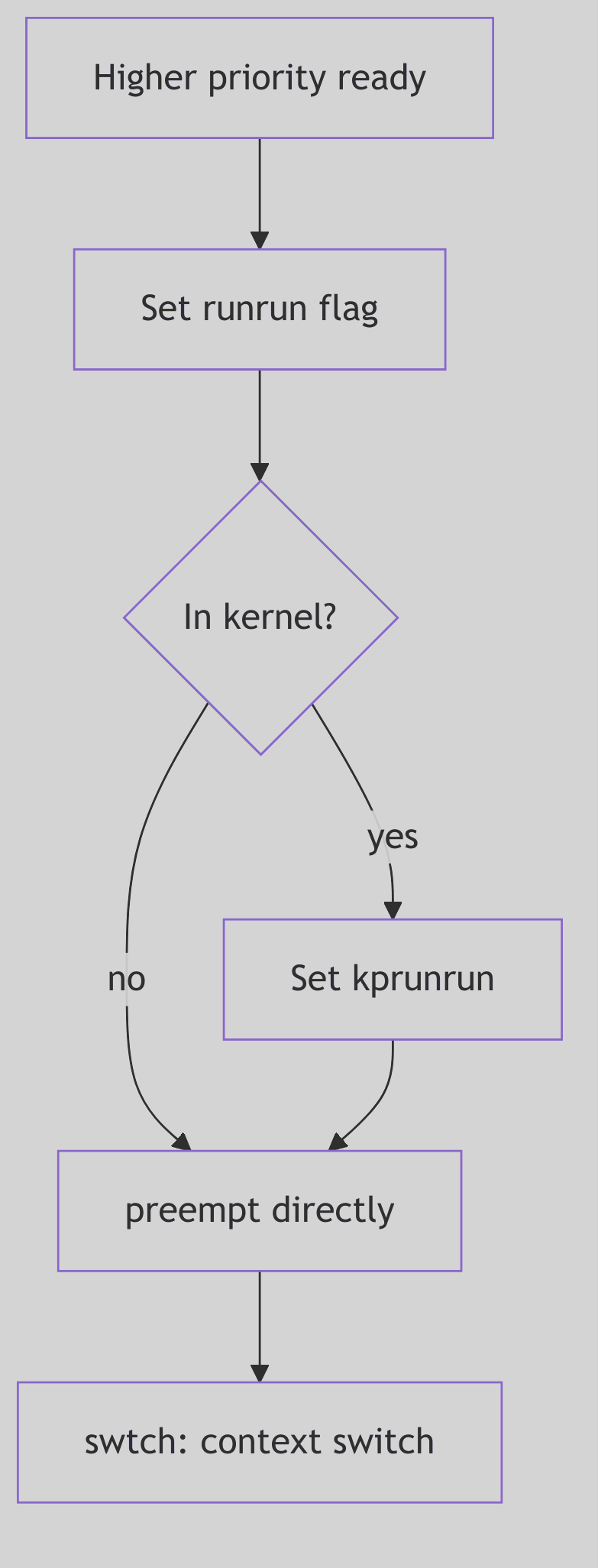

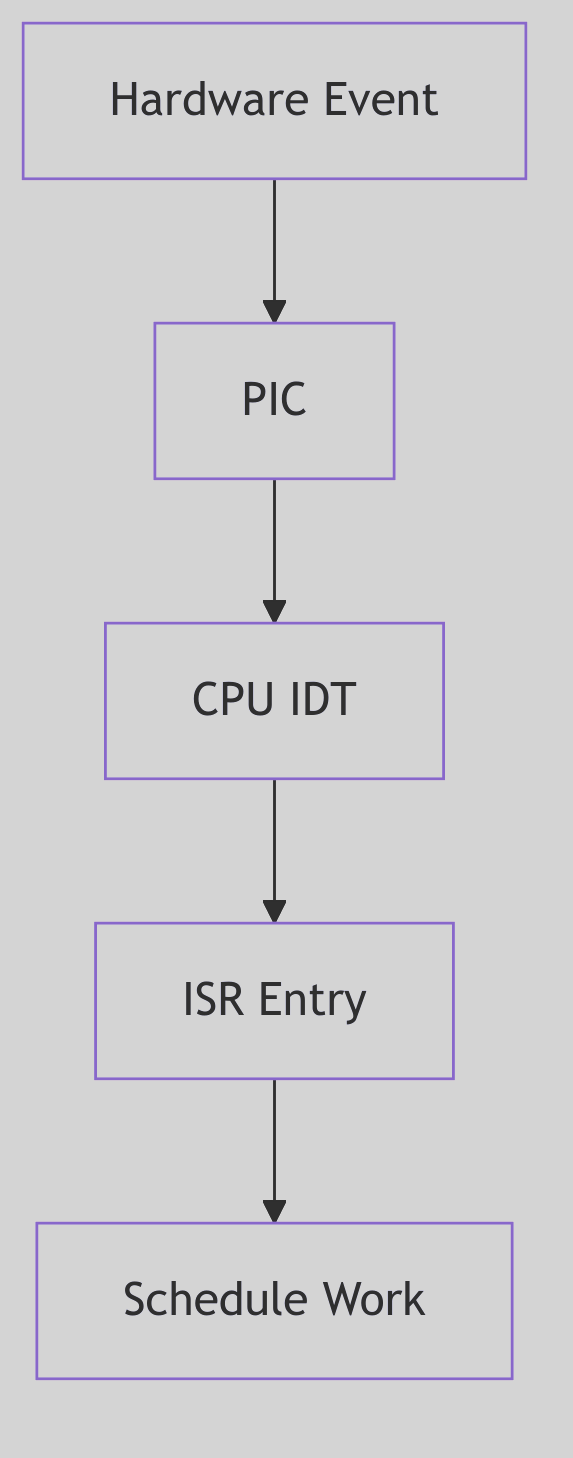

Figure 1.2.1: Preemption Flow

Figure 1.2.1: Preemption Flow

SVR4, a marvel of its time, was a preemptive kernel. This means that the CPU’s current tenant—the running process—could be summarily interrupted and forced to relinquish its hold, even if it hadn’t completed its quantum of time or explicitly paused. Preemption is the very essence of responsive multitasking, ensuring that critical events or higher-priority demands are never ignored.

Preemption could be triggered by several compelling forces:

- The Ascent of a Monarch: Should a process of unequivocally higher priority (an

RTprocess, for example) suddenly become runnable, the currently executing, lower-priority process is immediately—and without ceremony—ejected from the CPU. The new monarch reigns supreme. - The Tyranny of the Time Slice: For

Time-Sharingprocesses, the CPU is not an endless buffet but a carefully rationed meal. Once a process consumes its entire allotted time slice, the scheduler, with clockwork precision, preempts it. The process is then returned to the run queue, often with a subtly adjusted (read: reduced) priority, awaiting its next turn. - The Call of the Hardware: External interrupts, those urgent cries from the hardware (a disk signaling completion, a network packet arriving), can also trigger preemption. The CPU immediately diverts its attention to the interrupt handler. Upon completion, the scheduler re-evaluates the landscape, often leading to a context switch if a higher-priority task is now runnable.

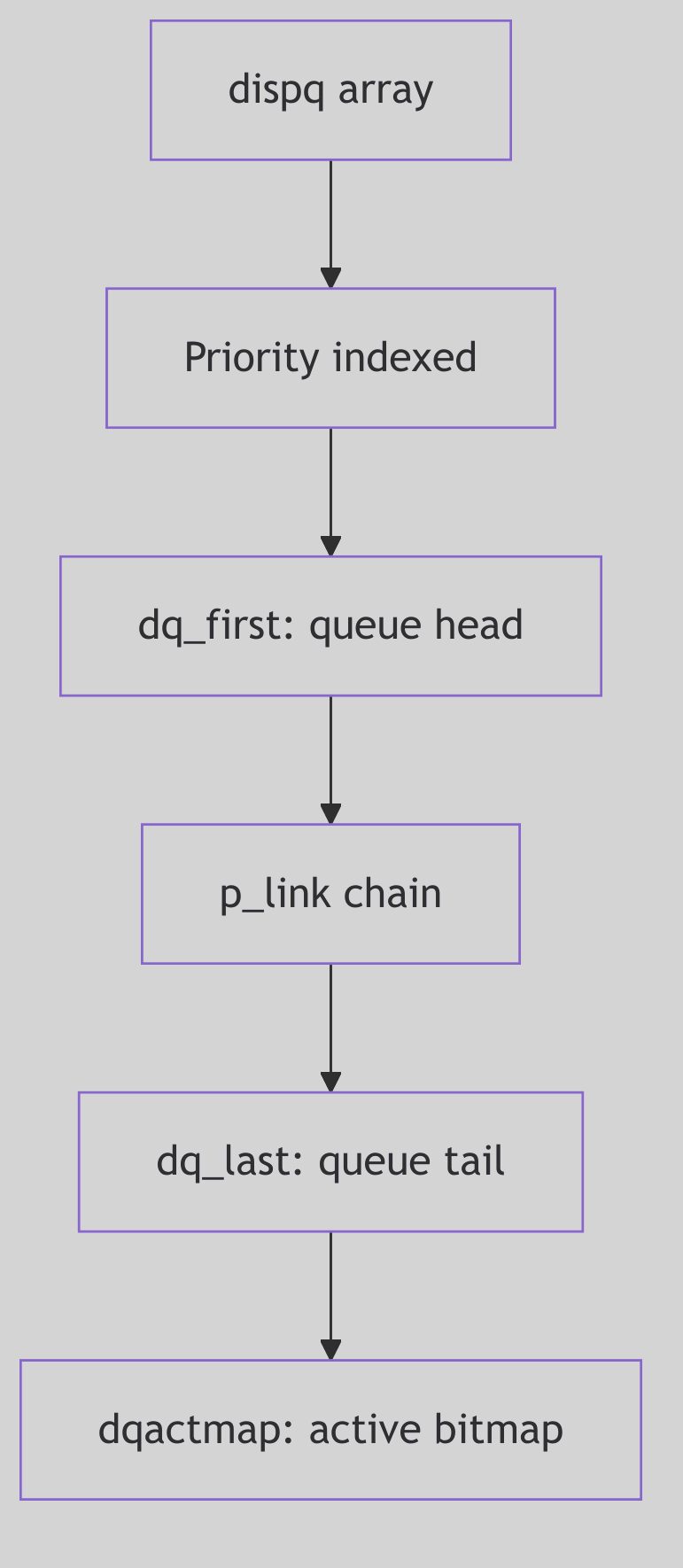

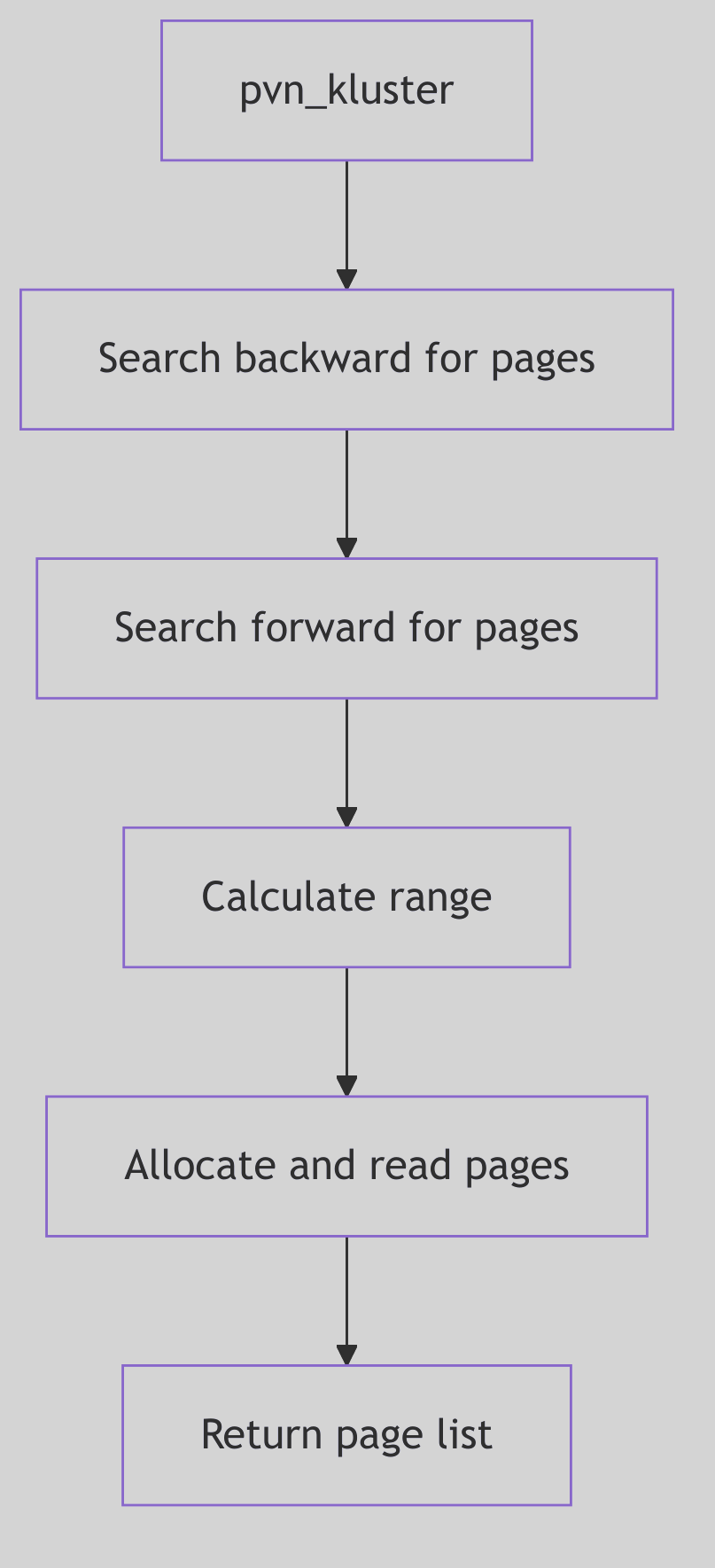

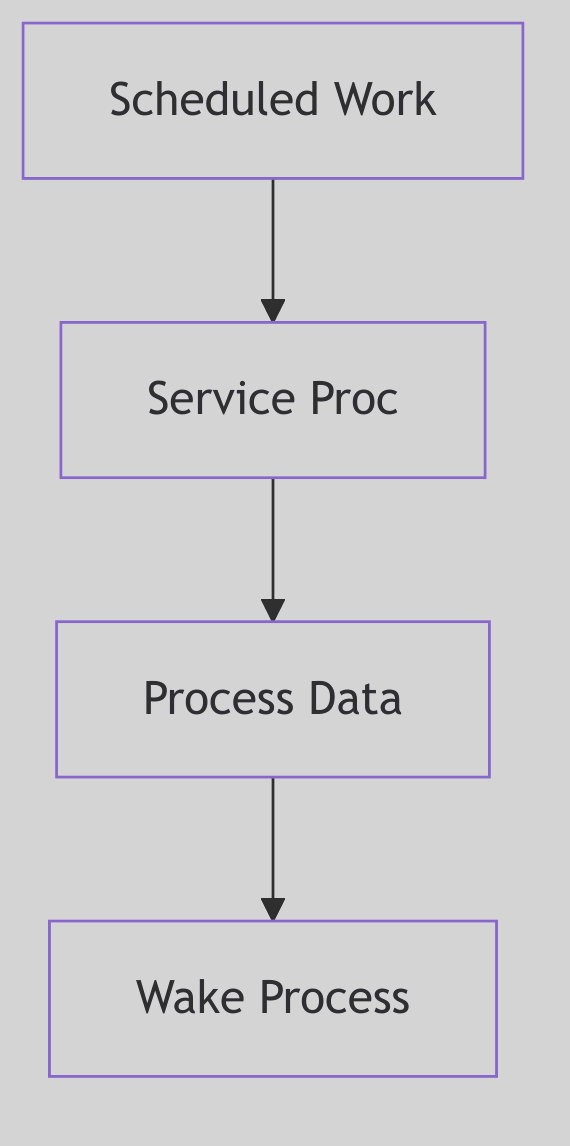

Dispatch Queues: The Scheduler’s Ledger

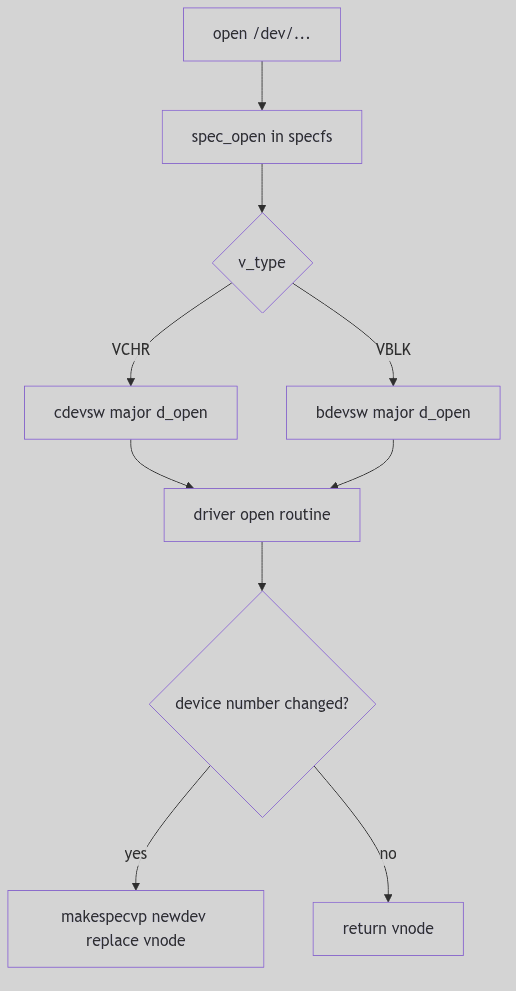

Figure 1.2.2: Dispatch Queues

Figure 1.2.2: Dispatch Queues

To manage this dynamic ebb and flow of processes, the SVR4 kernel employs dispatch queues. In practice, these are explicit data structures, often linked lists, each corresponding to a distinct priority level. When a process transitions from a blocked state to a runnable state, it is meticulously inserted into the appropriate dispatch queue for its current priority.

The scheduler’s central loop, a relentless cycle of decision and execution, can be distilled into these fundamental steps:

- Survey the Landscape: Continuously examine the dispatch queues for any runnable processes.

- Identify the Chosen One: From the highest-priority non-empty dispatch queue, pluck the next process slated for execution.

- The Great Switch: Initiate a context switch to the selected process, involving the intricate dance of saving the old state and restoring the new state, as we discussed with the

resume()function. - Repeat Ad Infinitum: The cycle perpetuates, an eternal vigil ensuring the CPU is always serving the highest demand.

This sophisticated yet elegant dispatching mechanism is how SVR4 maintained both responsiveness for critical tasks and fairness for general computation, a testament to the enduring principles of efficient operating system design.

Figure 1.2.3: Context Switch

Figure 1.2.3: Context Switch

Signal Handling

Whispers from the Kernel: The Art of Signal Handling

In the cacophony of a busy kernel, where processes dance to the CPU’s tune, there exists a delicate system of asynchronous communication: signals. These are not polite invitations, but urgent whispers, sharp nudges, or even outright shouts from the kernel, hardware, or other processes, designed to notify a process of an event that demands its attention. From an illegal memory access to the tap on the shoulder from a user pressing Ctrl+C, signals are the kernel’s primitive yet powerful mechanism for event-driven interaction. To truly master SVR4, one must understand this “dark art”—how signals are born, how they travel, and how a process, or indeed the kernel, chooses to respond.

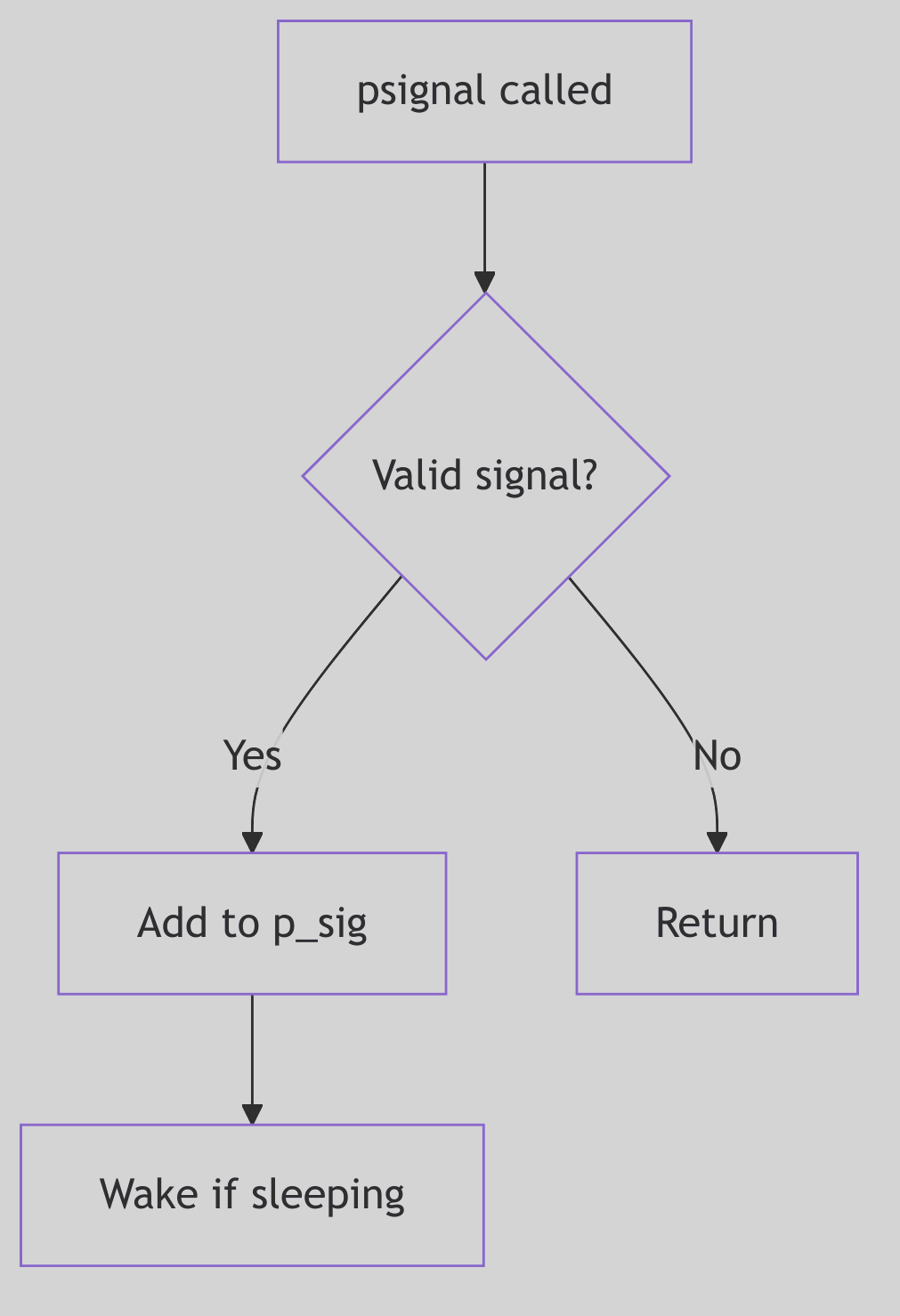

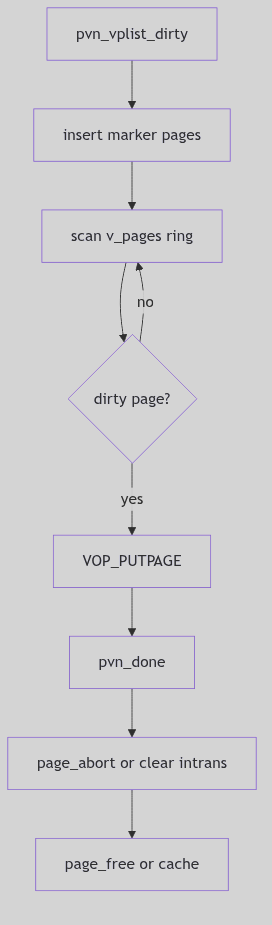

The Genesis of a Whisper: Signal Posting

Figure 1.3.1: Signal Posting

Figure 1.3.1: Signal Posting

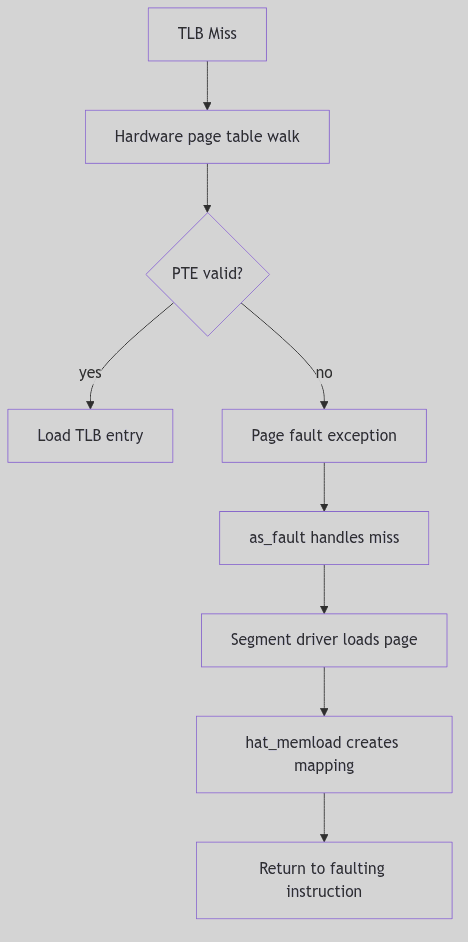

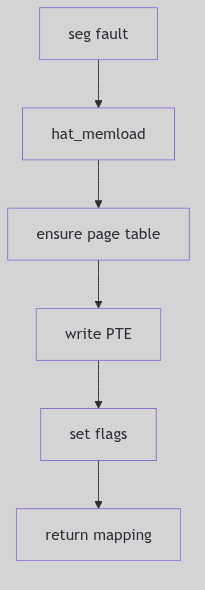

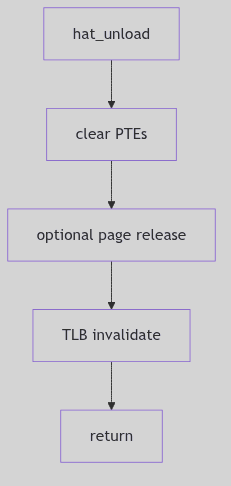

A signal’s journey begins with its posting. This is the act of marking a target process as having a pending signal. Whether triggered by a hardware fault (like a SIGSEGV), a user’s command (kill -9 PID), or a kernel event (like a child process terminating, sending a SIGCHLD), the kernel’s psignal() function (often starting its work around sig.c:103 in the SVR4 source) acts as the signal’s dispatcher.

The dispatch mechanism is surprisingly nuanced, especially for job control signals, which carry specific implications for process states. For instance, a SIGCONT (continue signal) is a powerful command:

/* Excerpt from sigtoproc() in sig.c:112-169 */

if (sig == SIGCONT) {

if (p->p_sig & sigmask(SIGSTOP))

sigdelq(p, SIGSTOP);

if (p->p_sig & sigmask(SIGTSTP))

sigdelq(p, SIGTSTP);

if (p->p_sig & sigmask(SIGTTOU))

sigdelq(p, SIGTTOU);

if (p->p_sig & sigmask(SIGTTIN))

sigdelq(p, SIGTTIN);

sigdiffset(&p->p_sig, &stopdefault);

if (p->p_stat == SSTOP && p->p_whystop == PR_JOBCONTROL) {

p->p_flag |= SXSTART;

setrun(p);

}

} else if (sigismember(&stopdefault, sig)) {

sigdelq(p, SIGCONT);

sigdelset(&p->p_sig, SIGCONT);

}

if (!tracing(p, sig) && sigismember(&p->p_ignore, sig))

return;

sigaddset(&p->p_sig, sig);

if (p->p_stat == SSLEEP) {

if ((p->p_flag & SNWAKE)

|| (sigismember(&p->p_hold, sig) && !EV_ISTRAP(p)))

return;

setrun(p);

} else if (p->p_stat == SSTOP) {

if (sig == SIGKILL) {

p->p_flag |= SXSTART|SPSTART;

setrun(p);

} else if (p->p_wchan && ((p->p_flag & SNWAKE) == 0))

unsleep(p);

} else if (p == curproc) {

u.u_sigevpend = 1;

}

Code Snippet 1.4: Job Control Signal Posting Logic (Excerpt)

This snippet illustrates the delicate dance between SIGCONT and stop signals (SIGSTOP, SIGTSTP, etc.). Posting a SIGCONT implicitly cancels any pending stop signals and, crucially, can awaken a process that was previously SSTOP (stopped). Conversely, attempting to stop a process will clear any pending SIGCONT. This mutual exclusivity is the kernel’s way of enforcing consistent job control semantics, preventing paradoxical states.

Once these job control nuances are handled, the signal is added to the process’s pending signal set (p->p_sig). If the target process is currently in an interruptible sleep (SSLEEP without the SNWAKE flag, meaning it can be awakened by a signal), the setrun() function is invoked to stir it from its slumber, making it runnable. However, the venerable SIGKILL is a brute-force exception: it will always awaken even a SSTOP process, as termination is its ultimate, undeniable decree.

For the currently executing process, a special flag (u.u_sigevpend) is set. This ensures that any signals posted to self (even from an interrupt context) are noticed and handled before the kernel finally relinquishes control and returns to user mode. This separation of “posting” (the asynchronous event) from “delivery” (the synchronous handling upon returning to user space) is a cornerstone of UNIX signal reliability.

The Ghost of SVR4: Reliability in a Preemptive World

The careful separation of signal posting from delivery was a design marvel for its time, ensuring consistency even when signals could arrive asynchronously from various sources (hardware, other processes). This two-phase approach prevented many race conditions that could plague simpler signal implementations. The kernel explicitly waits until a safe point (return to user mode) to process signals.

Modern Contrast (2026): While the fundamental principles remain, modern kernels often employ more sophisticated, per-thread signal queues and more granular control over signal delivery to individual threads within a multi-threaded process. However, the core idea of signals as asynchronous notifications, processed at specific, safe points, traces its lineage directly back to robust designs like SVR4’s.

Signal Handling - Whispers and Nudges

Signal Handling - Whispers and Nudges

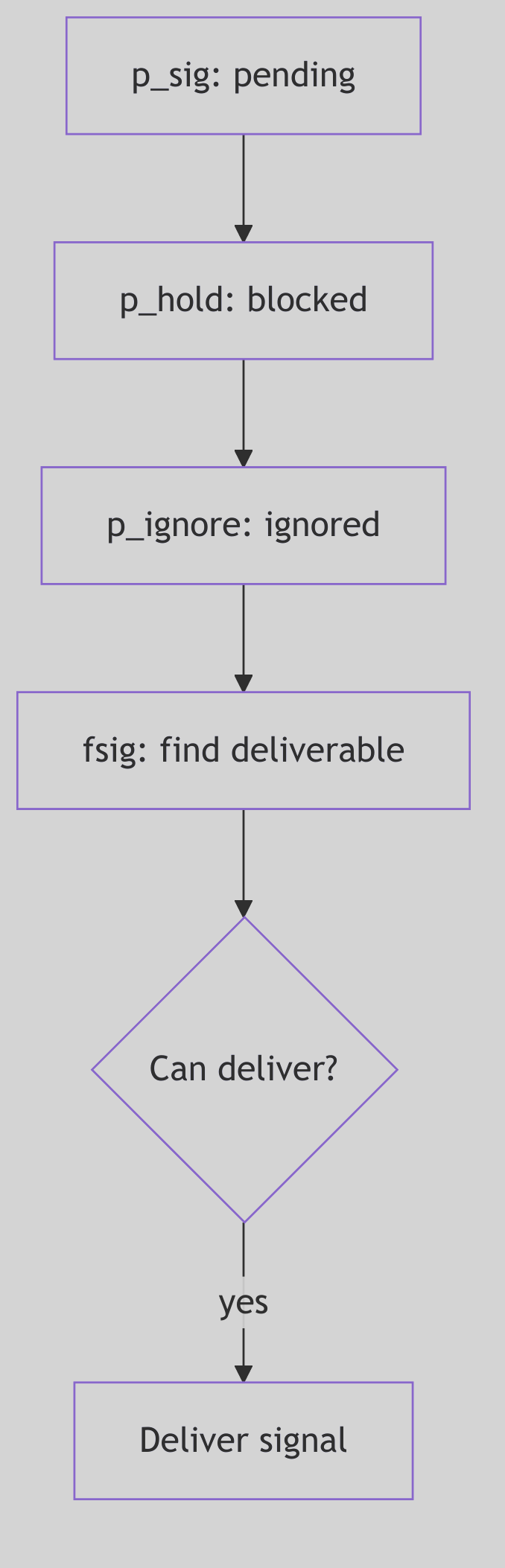

The Sentry’s Gate: Signal Masks and Sets

Figure 1.3.2: Signal Masks and Sets

Figure 1.3.2: Signal Masks and Sets

A process is not merely a passive recipient of signals; it possesses a sophisticated array of defenses and preferences, managed by the kernel through various signal bitmasks and predefined signal sets. These act as filters, allowing a process to selectively block, ignore, or trace specific signals, thereby controlling its susceptibility to external interruptions.

The kernel maintains several critical bitmasks for each process (conceptually depicted in Figure 1.3.3):

p_sig(Pending Signals): This bitmask holds the signals that have been posted to the process but have not yet been delivered. Think of it as a process’s “inbox” for incoming notifications.p_hold(Blocked Signals): Signals whose corresponding bit is set inp_holdare blocked. They will not be delivered to the process even if they are pending (p_sighas their bit set). They effectively wait inp_siguntil they are unblocked. This is crucial for critical sections of code where a process needs to avoid asynchronous interruptions.p_ignore(Ignored Signals): Signals in this set are simply discarded upon delivery. The process explicitly tells the kernel, “Don’t bother me with these; I don’t care.”p_sigmask(Traced Signals): Primarily used by debuggers, signals in this set cause the process to stop and notify its tracing parent when they are about to be delivered. This allows a debugger to intercept and potentially alter the signal’s fate.

Beyond these per-process masks, SVR4 defines several global k_sigset_t (kernel signal set type) bitmasks that encode fundamental, unchangeable behaviors (found in sig.c:72-89):

// Excerpt from sig.c:72-89 - Immutable Signal Properties

k_sigset_t cantmask = (sigmask(SIGKILL)|sigmask(SIGSTOP));

k_sigset_t cantreset = (sigmask(SIGILL)|sigmask(SIGTRAP)|sigmask(SIGPWR));

k_sigset_t stopdefault = (sigmask(SIGSTOP)|sigmask(SIGTSTP)

|sigmask(SIGTTOU)|sigmask(SIGTTIN));

k_sigset_t coredefault = (sigmask(SIGQUIT)|sigmask(SIGILL)|sigmask(SIGTRAP)

|sigmask(SIGIOT)|sigmask(SIGEMT)|sigmask(SIGFPE)

|sigmask(SIGBUS)|sigmask(SIGSEGV)|sigmask(SIGSYS)

|sigmask(SIGXCPU)|sigmask(SIGXFSZ));

Code Snippet 1.5: Predefined Kernel Signal Sets

These predefined sets are the kernel’s immutable laws regarding signals:

cantmask: This set, containingSIGKILLandSIGSTOP, represents signals that cannot be blocked, ignored, or caught.SIGKILLensures a process can always be forcibly terminated, whileSIGSTOPguarantees it can always be halted by the system. There are no safe words against these.cantreset: Signals likeSIGILL(illegal instruction) andSIGTRAP(trap instruction) cannot be reset to their default handlers bySA_RESETHAND. This prevents scenarios where a handler for a CPU exception might accidentally re-enable an infinite loop, crashing the system.stopdefault: These are the signals whose default action is to stop the process (e.g.,SIGTSTPfromCtrl+Z).coredefault: Signals in this set (e.g.,SIGSEGVfor segmentation fault,SIGQUITfor quit) will, by default, cause the process to terminate and dump a core file for post-mortem debugging.

Understanding these masks and sets is paramount, for they define the very boundaries of a process’s control over its own execution flow in the face of asynchronous events.

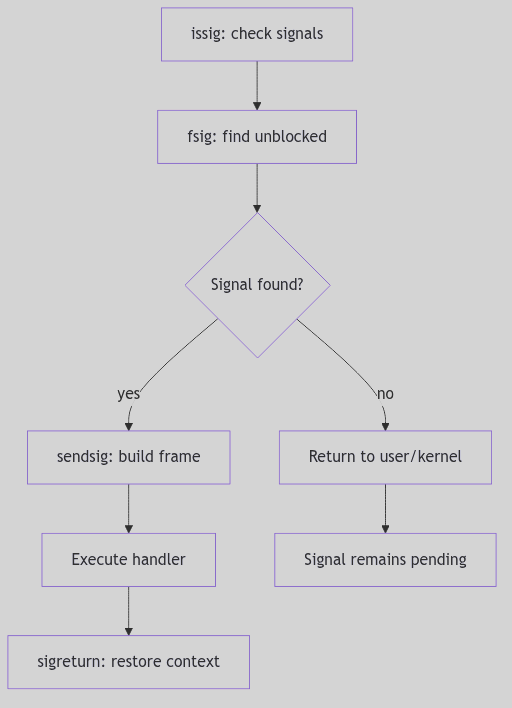

The Grand Interrogation: Signal Delivery

A signal, once posted and nestled in p_sig, does not immediately disrupt the process’s user-mode reverie. The SVR4 kernel is far more discerning. Signal delivery is typically a synchronous event, carefully orchestrated to occur at safe points—specifically, when a process is about to transition from kernel mode back into user mode. This is the moment for the issig() function (sig.c:224) to perform its grand interrogation.

Think of issig() as the gatekeeper, constantly scanning the process’s pending signals (via the fsig() helper function, which dutifully checks p->p_sig for the first unblocked, unignored signal). Its loop is relentless, ensuring no deliverable signal is overlooked:

/* Excerpt from issig() in sig.c:224-324 */

for (;;) {

if (why == FORREAL

&& (p->p_flag & SPRSTOP)

&& stop(p, PR_REQUESTED, 0, 0))

swtch();

if ((sig = p->p_cursig) != 0) {

p->p_cursig = 0;

if (why == JUSTLOOKING

|| (p->p_flag & SPTRX)

|| (!sigismember(&p->p_ignore, sig)

&& !isjobstop(sig)))

return p->p_cursig = (char)sig;

if (p->p_cursig == 0 && p->p_curinfo != NULL) {

kmem_free((caddr_t)p->p_curinfo,

sizeof(*p->p_curinfo));

p->p_curinfo = NULL;

}

continue;

}

for (;;) {

if ((sig = fsig(p)) == 0)

return 0;

if (tracing(p, sig)

|| !sigismember(&p->p_ignore, sig)) {

if (why == JUSTLOOKING)

return sig;

break;

}

sigdelset(&p->p_sig, sig);

sigdelq(p, sig);

}

sigdelset(&p->p_sig, sig);

p->p_cursig = (char)sig;

ASSERT(p->p_curinfo == NULL);

sigdeq(p, sig, &p->p_curinfo);

}

Code Snippet 1.6: The issig() Signal Delivery Loop (Excerpt)

Figure 1.3.3: Signal Delivery

Figure 1.3.3: Signal Delivery

The remaining portion handles tracing stops and debugger release (stop() and procxmt()), then loops to honor requested stops before returning (sig.c:325-360).

The issig() function follows a clear hierarchy of handling:

- Ignored Signals: If

fsig()discovers a signal that is marked inp->p_ignore, it’s summarily discarded (sigdelset,sigdelq), andissig()continues its scan, as if the signal never existed. - Traced Signals: For processes being debugged (

tracing(p, sig)), a deliverable signal causes the process to stop viastop()(sig.c:343). This notifies the tracing parent (the debugger), allowing it to inspect the process’s state and even potentially alter the signal or its action. The process enters anSSTOPstate and yields the CPU (swtch()), patiently awaiting the debugger’s command. - Job Control Stop Signals: If a

stopdefaultsignal (SIGSTOP,SIGTSTP,SIGTTIN,SIGTTOU) is found and its default action is active (i.e., not ignored or caught), theisjobstop()function intervenes (sig.c:177). This again stops the process, setting its state toSSTOP, and notifies its process group leader (and potentially the parent) viasigcld()). This is the fundamental mechanism behind shell job control, allowing you to suspend a foreground process withCtrl+Z.

Only after passing these gauntlets is a signal promoted to p_cursig, indicating it’s ready for its ultimate action.

The Process’s Reply: Signal Action and sigaction

Once issig() has crowned a signal as p_cursig, the psig() function (sig.c:420) steps in to determine the process’s final response. SVR4, adhering to the POSIX standard, provides a robust framework for specifying how a process reacts to a signal, primarily through the sigaction() system call. This system call is the process’s declaration of intent, a detailed instruction set for its signal handling strategy.

There are three fundamental actions a process can take:

-

Ignore: As discussed, if a signal is in

p->p_ignore, it’s silently discarded. This is the simplest form of dismissal. -

Default: Each signal has a predefined default action by the kernel. This can range from:

- Terminate: The process simply exits (

exit()). Signals likeSIGHUP,SIGINT,SIGTERMfall here. - Terminate and Core Dump: The process exits, but first writes an image of its memory (a “core dump”) to disk for post-mortem debugging.

SIGSEGV,SIGQUIT,SIGFPEare prime examples (members ofcoredefault). - Stop: The process suspends its execution.

SIGSTOP,SIGTSTPare the culprits here. - Ignore: A few signals, like

SIGCHLD(child status change) andSIGURG(urgent condition on a socket), are ignored by default.

- Terminate: The process simply exits (

-

Catch (User-Defined Handler): This is where a process truly asserts its control. Using

sigaction(), a process can specify a user-mode function (a “signal handler”) to be executed when a specific signal is delivered. This allows applications to gracefully respond to events, such as saving state before termination (SIGTERM) or resetting broken network connections.

The sigaction structure is the heart of this customization (sys/signal.h:78-87):

struct sigaction {

int sa_flags;

void (*sa_handler)();

sigset_t sa_mask;

int sa_resv[2];

};

Code Snippet 1.7: The sigaction Structure (sys/signal.h)

When a user-defined handler is invoked (sendsig() in sig.c:467), the kernel performs a meticulous setup:

-

Blocking Signals (

sa_mask,SA_NODEFER): Thesa_maskin thesigactionstructure specifies a set of signals to be blocked (added top_hold) for the duration of the handler’s execution. This prevents these signals from interrupting the handler itself, ensuring its atomic execution. Crucially, the signal currently being handled is automatically blocked to prevent reentrant delivery unless theSA_NODEFERflag is set. -

One-Shot Handlers (

SA_RESETHAND): If theSA_RESETHANDflag is set, the kernel automatically resets the signal’s disposition toSIG_DFL(default) before invoking the handler. This creates a “one-shot” handler that only executes once, after which the signal reverts to its default behavior. -

The Signal Frame (

sendsig()): This is where the kernel works its magic at the boundary of kernel and user space. Thesendsig()function (which is typically architecture-specific, meaning it differs for i386 vs. SPARC) meticulously constructs a “signal frame” on the user’s stack. This frame is a temporary data structure containing:- The signal number.

- Extended signal information (

siginfo_t, ifSA_SIGINFOwas specified). - A snapshot of the process’s user-mode CPU context (

ucontext_tor equivalent, including registers, program counter, stack pointer). - The return address, carefully set to point to the user-defined signal handler.

The kernel then modifies the process’s saved CPU state (the one that would normally be restored upon return from kernel mode) to make it appear as if the process had called its own signal handler. When the kernel returns to user mode, instead of resuming the interrupted code, the CPU jumps directly to the signal handler.

When the user-defined signal handler completes its execution, it does not typically return using a standard ret instruction. Instead, it must invoke the sigreturn() system call. This specialized system call is the handler’s graceful exit. sigreturn()’s sole purpose is to dismantle the signal frame, restore the original process context (from before the signal delivery), and atomically unblock any signals that were blocked by sa_mask. This allows the process to seamlessly resume execution from precisely where it was interrupted, often unaware of the kernel’s swift, intricate intervention.

The Ghost of SVR4: The Evolution of Signal Semantics

Early UNIX signals were notoriously unreliable and fraught with race conditions (System V signals being a prime example). Signals could be lost, or handlers could be re-entered unpredictably. The

sigaction()interface, along with the detailed blocking semantics (sa_mask,SA_NODEFER) and thesigreturn()mechanism, were crucial advancements introduced (or standardized by POSIX, which SVR4 embraced) to make signal handling robust and predictable. This provided developers with the necessary tools to write reliable signal-driven applications.Modern Contrast (2026): While

sigaction()remains the preferred and most robust API for signal handling in modern UNIX-like systems (including Linux), the evolution of multi-threading (pthreads) introduced new complexities. Signals are now often delivered to specific threads rather than entire processes, requiring thread-specific signal masks (pthread_sigmask). Despite these advancements, the core mechanisms of signal posting, masking, delivery, and the signal frame concept are directly descended from the foundations laid by systems like SVR4.

Safeguarding the Kernel: Implementation Notes

The SVR4 signal mechanism is fortified with careful considerations for race conditions and system stability:

SIGKILL’s Supremacy: A pendingSIGKILLalways takes precedence, ensuring that a process can be terminated regardless of what other signals it’s handling or blocking. There is no escape fromSIGKILL.- Stop vs. Kill:

SIGKILLcannot be stopped. If aSIGKILLis pending, any attempt to stop the process is overridden, guaranteeing unstoppable termination. - Timely Delivery: The

u.u_sigevpendflag for the current process is a subtle yet vital mechanism. It forces the kernel to check for signals immediately before returning to user mode, ensuring that signals posted even in an interrupt context (e.g., a timer interrupt posting aSIGALRM) are noticed without undue delay. - Queued Signal Information: For real-time signals (which SVR4 did support, though less prevalently than in modern systems) or when extended signal information is requested (

SA_SIGINFO), the kernel maintains detailed queues ofsiginfo_tstructures. Functions likesigdelq()andsigdeq()manage this richer information, allowing the handler to receive not just the signal number, but also why and where the signal occurred.

This careful separation of the asynchronous act of signal posting from the synchronous, controlled act of signal delivery, coupled with robust masking and a well-defined action framework, underpins the reliability of SVR4’s signal architecture.

System Calls

The Kernel’s Gateway: System Calls Interface

In the austere landscape of a protected mode operating system, user-space processes are like diligent workers confined to a meticulously guarded factory floor. They can perform their tasks with gusto, but should they require raw materials from the warehouse (disk I/O), or need to communicate with central management (process creation), they cannot simply wander off. Instead, they must respectfully knock on a very specific door: the System Call Interface. This is the kernel’s tightly controlled, rigorously audited gateway, providing the only legitimate means for a user process to request privileged operations and interact with the very heart of the operating system.

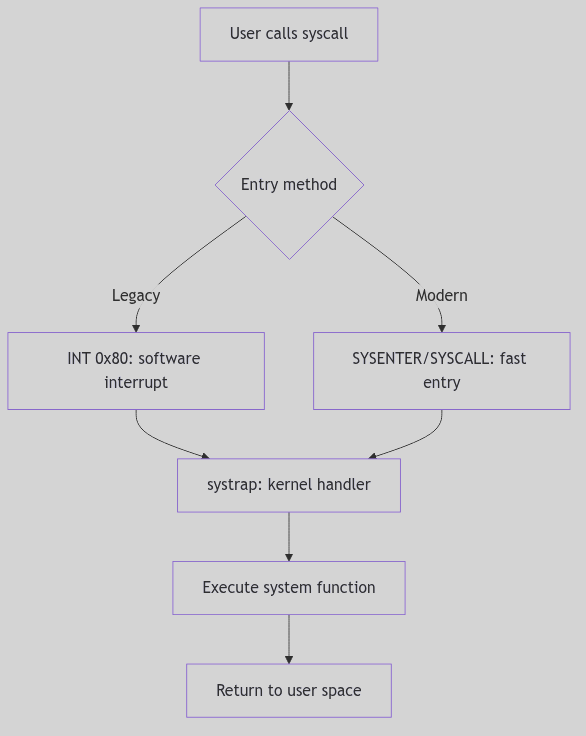

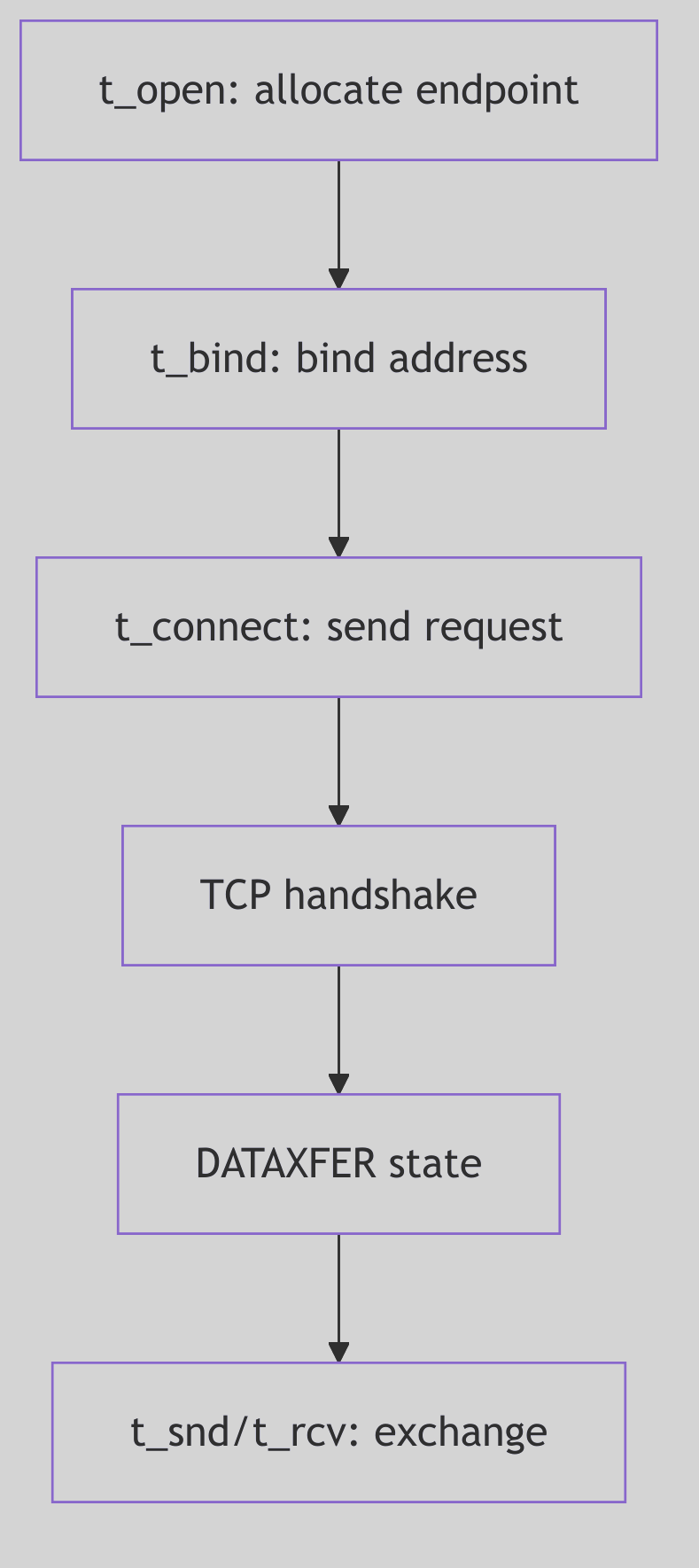

The Knock on the Door: System Call Entry

The journey into the kernel begins with a solemn ritual. When a user program requires a kernel service (e.g., read(), write(), fork()), it doesn’t directly execute kernel code. Instead, it typically calls a wrapper function in the C standard library. This wrapper performs a crucial preparatory step: it loads a unique system call number (an integer identifier for the desired service) into a designated CPU register, usually EAX on the i386 architecture.

Then, with a flourish, the wrapper triggers a software interrupt, specifically INT 0x80 in classic i386 implementations. This INT 0x80 instruction is the magical incantation that causes the CPU to shed its user-mode privileges and elevate itself to the exalted kernel mode. It’s an atomic, hardware-assisted transition designed for security and efficiency.

Modern Evolution: Contemporary x86 processors provide faster system call entry mechanisms:

SYSENTER(Intel) andSYSCALL(AMD), which avoid the overhead of a full interrupt gate transition. These instructions perform a more direct privilege-level switch, bypassing the interrupt descriptor table lookup and offering significantly improved performance for high-frequency system calls. Modern kernels typically support both the legacyINT 0x80path (for compatibility) and the fast entry path.

Upon activation of the entry instruction, control is transferred to the kernel’s dedicated entry point, often a highly optimized assembly routine like systrap. This routine, the vigilant doorman of the kernel, immediately performs several critical tasks:

- Context Preservation: The first and foremost duty is to meticulously save the entire user-mode CPU context (registers, stack pointer, program counter, flags) onto the kernel stack. This snapshot ensures that when the system call completes, the user process can seamlessly resume execution from precisely where it left off, as if the kernel interlude never happened.

- Privilege Check: The kernel verifies that the incoming request is legitimate and that the system call number itself is valid.

- Argument Retrieval: The arguments for the system call, which the user process pushed onto its own stack, must be safely copied from user space to kernel space. This copy is not merely a transfer; it’s a careful validation, ensuring that user-provided pointers do not reference invalid or malicious memory locations within the user’s or, worse, the kernel’s address space.

On i386, the low-level entry stub is in ml/ttrap.s. The system call gate saves registers, clears segment registers, and then calls systrap() in C (ml/ttrap.s:312-348):

sys_call:

subl $8, %esp / pad with dummy ERRCODE, TRAPNO

pusha / save user registers

pushl %ds

pushl %es

pushl %fs

pushl %gs

...

xorw %ax, %ax

movw %ax, %fs

movw %ax, %gs

call kentry_check

sti

movb $0, u+u_sigfault

pushl %esp

call systrap

addl $4, %esp

Code Snippet 1.8a: The systrap Entry Stub (ml/ttrap.s)

Figure 1.4.1: System Call Flow

Figure 1.4.1: System Call Flow

System Calls - Protected Factory

System Calls - Protected Factory

The sysent Table: The Kernel’s Service Directory

Once safely within the kernel, the systrap routine consults the sysent table (defined in sysent.c), which serves as the kernel’s authoritative directory of all available system calls. This table is an array of struct sysent entries, indexed directly by the system call number (the value initially placed in EAX).

Each entry in the sysent table is a compact but vital record:

/* Excerpt from os/sysent.c */

struct sysent sysent[] = {

0, 0, nosys, /* 0 = indir */

1, 0, (int(*)())rexit, /* 1 = exit */

0, 0, fork, /* 2 = fork */

3, SETJUMP|ASYNC|IOSYS, read, /* 3 = read */

3, SETJUMP|ASYNC|IOSYS, write, /* 4 = write */

3, SETJUMP, open, /* 5 = open */

1, SETJUMP, close, /* 6 = close */

0, SETJUMP, wait, /* 7 = wait */

2, SETJUMP, creat, /* 8 = creat */

2, 0, link, /* 9 = link */

1, 0, unlink, /* 10 = unlink */

2, 0, exec, /* 11 = exec */

1, 0, chdir, /* 12 = chdir */

0, 0, gtime, /* 13 = time */

3, 0, mknod, /* 14 = mknod */

2, 0, chmod, /* 15 = chmod */

3, 0, chown, /* 16 = chown */

};

Code Snippet 1.8: The sysent Table (Excerpt)

Here, the first field typically indicates the number of arguments the system call expects, the second might hold flags, and the third is a function pointer to the actual kernel handler responsible for executing the system call’s logic. This design provides an efficient and organized way for the kernel to dispatch control to the correct handler based on the system call number provided by the user process.

The Language of Request: Argument Passing

The kernel’s systrap entry point, having identified the correct system call handler via sysent, now orchestrates the transfer of arguments from the user process to the kernel handler. This is a highly sensitive operation, as the kernel must never implicitly trust data originating from user space.

Arguments are typically pushed onto the user process’s stack before the INT 0x80 instruction. The kernel reads them in systrap() using lfuword() and the per-process argument array (os/trap.c:808-836):

/* Excerpt from systrap() in os/trap.c */

u.u_syscall = r0ptr[EAX];

if ((u.u_syscall & 0xff) >= sysentsize)

u.u_syscall = 0;

scall = u.u_syscall & 0xff;

callp = &sysent[scall];

u.u_sysabort = 0;

{

register u_int *sp = (u_int *)r0ptr[UESP];

register u_int i;

sp++; /* skip return addr */

for (i = 0; i < callp->sy_narg; i++) {

u.u_arg[i] = lfuword((int *)(sp++));

}

}

Code Snippet 1.9: Argument Harvesting in systrap()

This copyin() (and its counterpart copyout() for returning data) is more than just a memory copy; it includes critical validation steps:

- Address Range Check: Does the user-provided address for an argument (e.g., a buffer for

read()) actually fall within the user process’s allocated virtual address space? An attempt to read from or write to arbitrary memory locations (especially kernel space) would be a severe security breach. - Permissions Check: Does the user process have the necessary permissions to access the memory at the given address? For instance,

copyin()ensures read access, whilecopyout()ensures write access. - Size Limits: If a size is specified (e.g., number of bytes to read), the kernel ensures that the operation does not exceed the bounds of the user-provided buffer or other reasonable limits.

Only after these stringent checks are passed are the arguments provided to the system call handler. The handler then executes its core logic, operating within the full privileges and context of the kernel. Results, if any, are typically placed into an rval_t structure (e.g., containing r_val1 and r_val2 for system calls like pipe() that return two values, like a file descriptor pair). This ensures a standardized return mechanism from kernel to user space.

The Ghost of SVR4: The Legacy of INT 0x80

The

INT 0x80mechanism was the standard, and often the only, way to enter the kernel on i386 systems in the SVR4 era. It was robust, well-understood, and provided the necessary protection boundary. However, each software interrupt involves significant overhead due to the saving and restoring of context and the transition through the interrupt descriptor table.Modern Contrast (2026): Modern x86 processors (and other architectures) feature specialized, faster instructions for system call entry, such as

SYSENTER/SYSEXIT(Intel) andSYSCALL/SYSRET(AMD). These instructions offer a more streamlined, hardware-optimized path directly into the kernel, bypassing some of the interrupt overhead. WhileINT 0x80may still be supported for compatibility, high-performance applications and modern operating systems prefer these dedicated fast system call mechanisms, a testament to the continuous pursuit of efficiency in the face of ever-increasing system call frequencies.

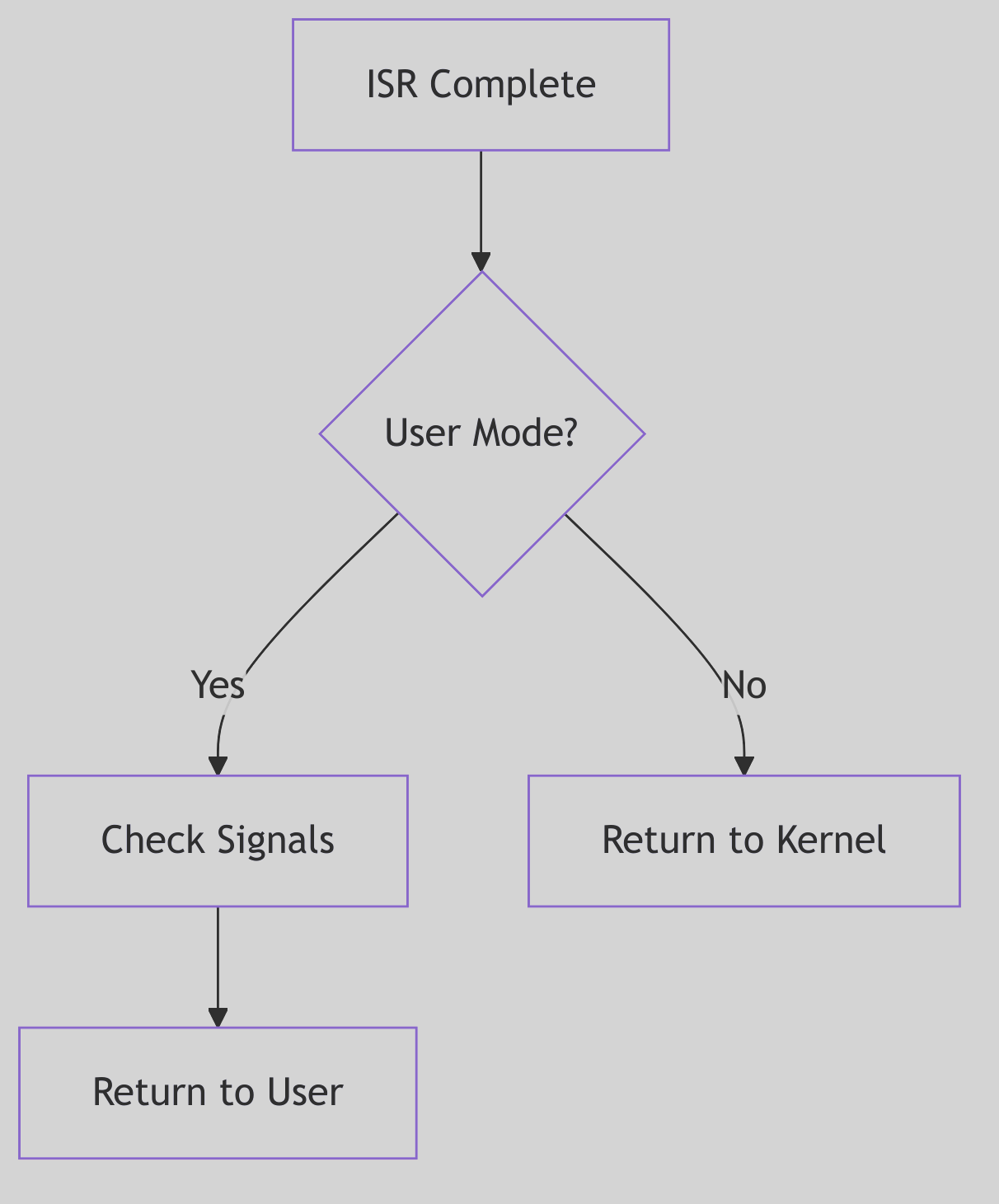

The Return Journey: System Call Exit

Having successfully navigated the kernel’s labyrinth, performed its sacred duties, and perhaps even transformed the system state, the system call handler must now orchestrate a graceful exit. The journey back from the privileged kernel mode to the constrained user mode is not a mere reversal of entry but a carefully choreographed sequence of checks and context restorations. The systrap_rtn routine, the counterpart to systrap, assumes command for this delicate phase.

Before the CPU can shed its kernel-mode attire and resume user-mode execution, systrap_rtn performs two critical checks:

-

Signal Interception (

issig()): The very first order of business is to invokeissig(), our familiar signal gatekeeper from Section 1.3. This check is paramount: if any signals have been posted to the process while it was executing in kernel mode,issig()will identify the highest-priority deliverable signal. If such a signal is found, the kernel will not return directly to the user’s interrupted instruction. Instead, it will divert control to the signal delivery mechanism (psig()), potentially invoking a user-defined signal handler or executing the signal’s default action (e.g., terminating the process). This ensures that asynchronous events are processed promptly and that a process cannot indefinitely defer signal handling by remaining in kernel mode. -

Preemption Check (

runrunflag): Next,systrap_rtninspects therunrunflag. This humble-looking flag is the scheduler’s silent decree, a one-bit memo indicating that a preemption event is pending—a higher-priority process is now runnable, or the current process’s time slice has expired. Ifrunrunis set, the kernel will not return to the current user process. Instead, it triggers a context switch (preempt()), handing control back to the scheduler to select the next deserving process. This ensures that the kernel maintains its responsiveness and fairness, even when a process has just completed a system call.

Only if both these checks are cleared—no deliverable signals and no pending preemption—does the kernel proceed to restore the user-mode CPU context that was so carefully preserved during system call entry. The saved registers, stack pointer, and program counter are meticulously reloaded, and finally, a special instruction (typically IRET on i386 for interrupt returns) is executed. This IRET instruction atomically restores the CPU to user mode and returns control to the precise instruction in the user program that was interrupted by the INT 0x80.

The Echo of the Kernel: Return Values

The outcome of a system call needs to be communicated back to the requesting user process. In SVR4, the results are typically passed back via general-purpose registers. Successful system calls return a non-negative value (often 0, or a specific result like a file descriptor or byte count) in EAX.

In the event of an error, the system call typically returns a value of -1 in EAX, and sets the CPU’s carry flag. This carry flag is a subtle but critical indicator. The C library wrapper, which initiated the INT 0x80 call, then checks this carry flag. If set, it interprets the value in EAX as an error code (a negative errno value), translates it into the appropriate positive errno value (e.g., EFAULT, EPERM, ENOENT), and stores it in the global errno variable, making it accessible to the user program.

This meticulous dance of entry, execution, and exit, punctuated by critical checks for signals and preemption, forms the very bedrock of the SVR4 kernel’s interaction with user applications, safeguarding system integrity while providing essential services.

Process Groups and Sessions: The Theater, the Cast, and the Stage Manager

Picture a grand theater with a single stage. Actors are grouped into casts, each cast rehearsing a scene. The stage manager must know which cast is on stage and which is waiting in the wings. When the curtain rises, the stage belongs to one cast only; the others must stay silent or be ushered off.

SVR4’s process groups and sessions are that theater. A process group is a cast. A session is the production. The controlling terminal is the stage, and job control is the stage manager’s whistle.

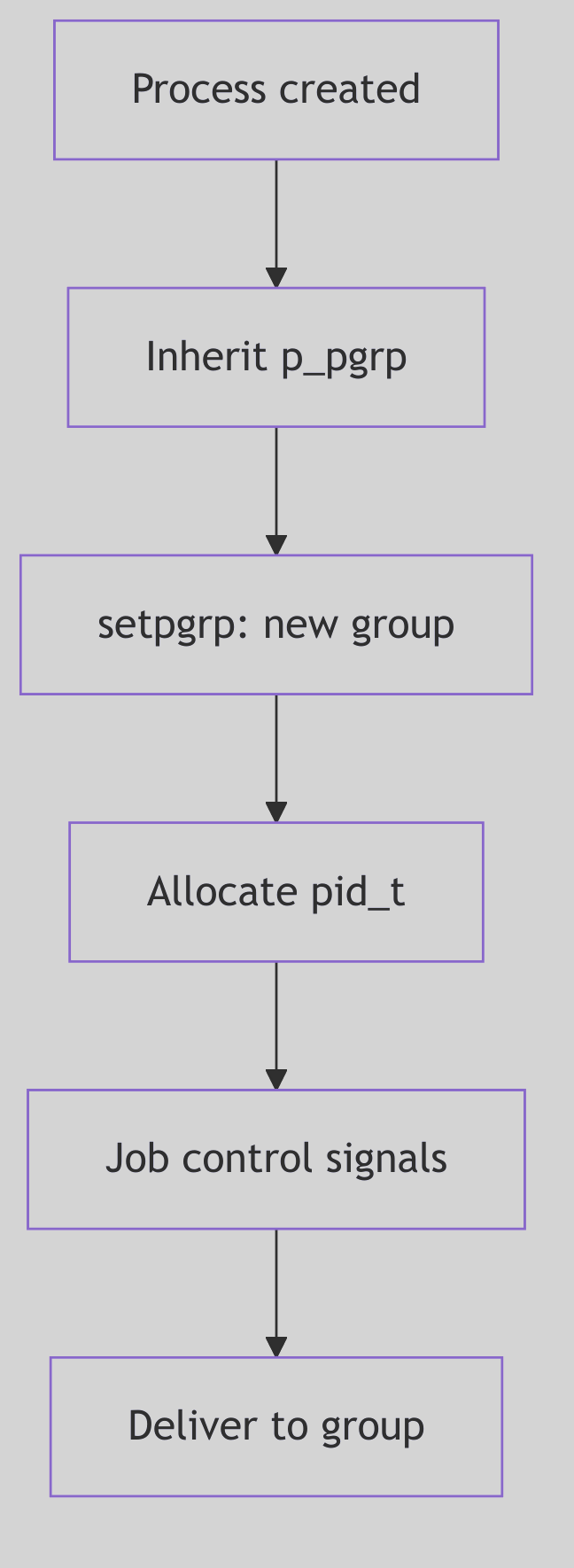

The Cast List: Process Groups

Process groups are represented by struct pid entries that link members together. When a process joins a group, pgjoin() links it into the group list and updates group metadata (os/pgrp.c:79-101).

void

pgjoin(p, pgp)

register proc_t *p;

register struct pid *pgp;

{

p->p_pglink = pgp->pid_pglink;

pgp->pid_pglink = p;

p->p_pgidp = pgp;

if (pgp->pid_id <= SHRT_MAX)

p->p_opgrp = (o_pid_t)pgp->pid_id;

else

p->p_opgrp = (o_pid_t)NOPID;

if (p->p_pglink == NULL) {

PID_HOLD(pgp);

if (pglinked(p))

pgp->pid_pgorphaned = 0;

else

pgp->pid_pgorphaned = 1;

} else if (pgp->pid_pgorphaned && pglinked(p))

pgp->pid_pgorphaned = 0;

}

The Casting Call (os/pgrp.c:79-101)

Each group keeps a linked list of members via pid_pglink. The pid_pgorphaned flag tracks whether the group has become orphaned, which affects job-control signals.

Figure 1.5.1: Processes Linked into a Group

Figure 1.5.1: Processes Linked into a Group

Process Groups - Military Regiment

Process Groups - Military Regiment

Signaling the Cast: pgsignal()

When the kernel needs to signal a whole group, it walks the group list and delivers the signal to each member (os/pgrp.c:65-72).

for (prp = pidp->pid_pglink; prp; prp = prp->p_pglink)

psignal(prp, sig);

The Stage Manager’s Whistle (os/pgrp.c:69-72)

This is how kill(-pgid, SIGTERM) fans out to every process in the group, and how job control can suspend or continue a whole cast at once.

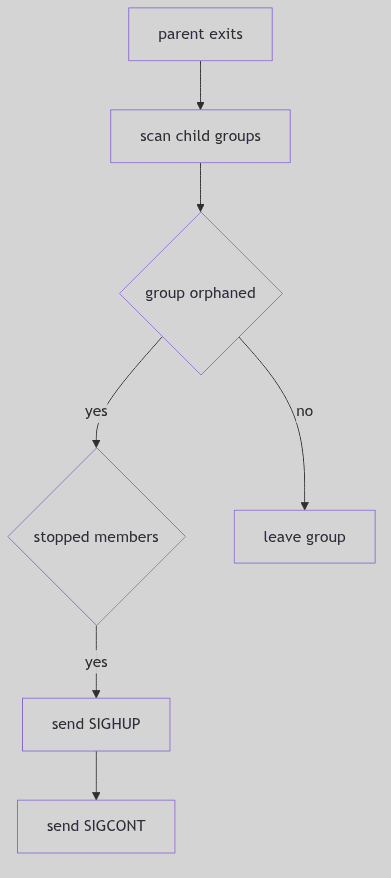

Orphaned Groups and Job Control

When a parent exits, it may orphan a child’s process group. pgdetach() checks whether any remaining parent outside the group can still influence it. If not, and if any member is stopped, the kernel sends SIGHUP and SIGCONT to the group (os/pgrp.c:144-170). The stage manager clears the scene so no stopped cast remains abandoned.

This is the mechanism behind the classic shell behavior: background jobs are terminated or resumed when their controlling shell exits.

Figure 1.5.2: Orphan Detection and Group Signaling

Figure 1.5.2: Orphan Detection and Group Signaling

The Production: Sessions

A session groups process groups and anchors the controlling terminal. The sess_t structure in sys/session.h records the session ID, controlling terminal, and reference count (sys/session.h:14-26).

typedef struct sess {

short s_ref; /* reference count */

short s_mode; /* /sess current permissions */

uid_t s_uid; /* /sess current user ID */

gid_t s_gid; /* /sess current group ID */

ulong s_ctime; /* /sess change time */

dev_t s_dev; /* tty's device number */

struct vnode *s_vp; /* tty's vnode */

struct pid *s_sidp; /* session ID info */

struct cred *s_cred; /* allocation credentials */

} sess_t;

The Production Ledger (sys/session.h:14-25)

Creating a new session via setsid() ultimately calls sess_create(), which detaches the process from its current group, allocates a new session, and makes the process its own process group leader (os/session.c:57-77).

pp = u.u_procp;

pgexit(pp);

SESS_RELE(pp->p_sessp);

sp = (sess_t *)kmem_zalloc(sizeof (sess_t), KM_SLEEP);

sp->s_sidp = pp->p_pidp;

sp->s_ref = 1;

sp->s_dev = NODEV;

pp->p_sessp = sp;

u.u_ttyp = NULL; /* compatibility */

pgjoin(pp, pp->p_pidp);

PID_HOLD(sp->s_sidp);

The New Production (os/session.c:57-79, excerpt)

Figure 1.5.3:

Figure 1.5.3: setsid() and Session Formation

The Stage: Controlling Terminals

The controlling terminal belongs to a session. Foreground process groups may read and write freely; background groups are disciplined by signals. When a session loses its terminal, freectty() sends SIGHUP and clears the association (os/session.c:80-103). This is the stage manager striking the set at the end of the show.

The job-control signals SIGTTIN and SIGTTOU enforce the rule that only the foreground cast uses the stage. Background actors may speak, but the manager will suspend them if they interrupt the play.

The Ghost of SVR4: We organized casts and productions so that terminals could be governed without chaos. Modern shells still use the same model, though virtual terminals, containers, and session leaders have multiplied. The stage is now shared across many theaters, yet the rule remains: one foreground cast at a time.

The Curtain Falls

Process groups and sessions are the social order of the kernel. They tell the system who belongs together, who owns the stage, and who must be silenced when the curtain rises. The theater runs smoothly because the cast list is precise and the stage manager never forgets who is in charge.

PID Management: The Registry of Names

A city cannot function without a registry of names. Each citizen is assigned a number, recorded in a ledger, and removed only after their affairs are settled. If a number is reused too soon, confusion follows. The registry clerk must be careful and methodical.

SVR4’s PID management is that registry. It allocates process IDs, maintains a hash for fast lookup, and keeps reference counts so a PID is not recycled while it is still referenced by process groups or sessions.

The Ledger Entry: struct pid

PIDs are represented by struct pid entries stored in a hash table. In os/pid.c the kernel keeps a pidhash array and helper macros (os/pid.c:51-55).

#define HASHSZ 64

#define HASHPID(pid) (pidhash[((pid)&(HASHSZ-1))])

The Registry Buckets (os/pid.c:51-53)

Each pid entry links to a process group list and tracks reference counts and the /proc slot (os/pid.c:41-49, 167-175). The details are scattered in proc_t and the pid structure, but the principle is clear: the pid entry is the registry’s card for that identity.

Figure 1.6.1: PID Allocation and Lookup

Figure 1.6.1: PID Allocation and Lookup

PID Management - Registrar’s Office

PID Management - Registrar’s Office

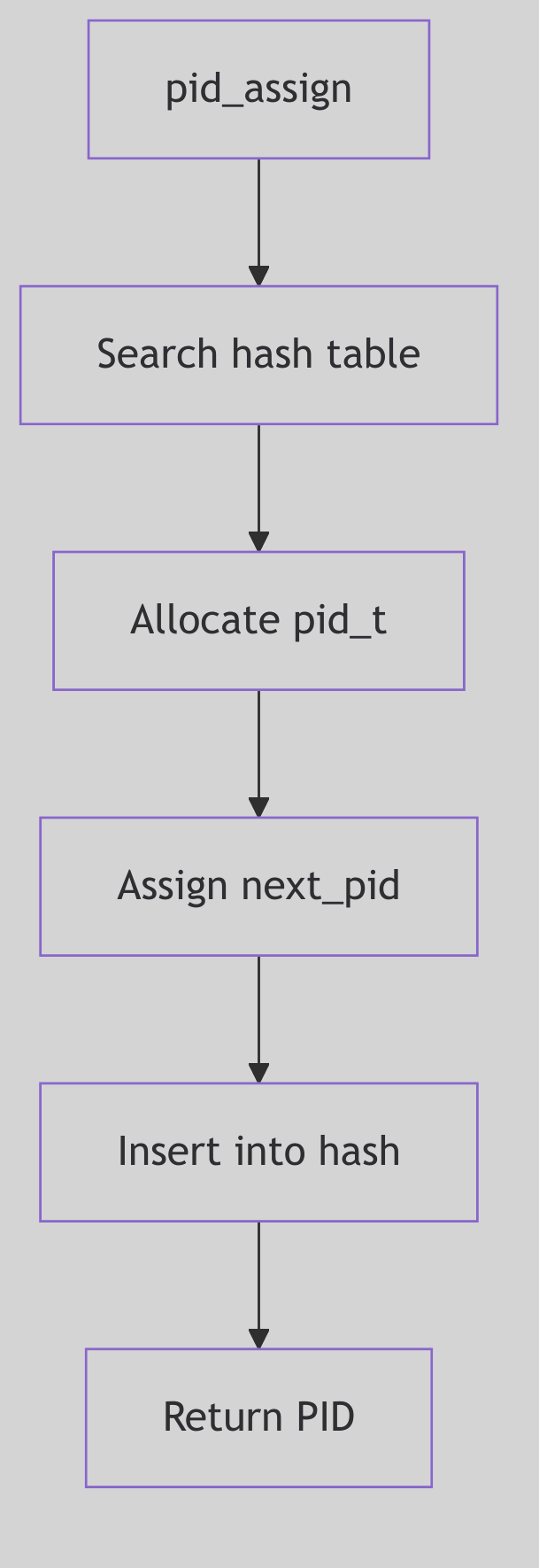

Assigning a New Name: pid_assign()

pid_assign() handles PID allocation at fork time (os/pid.c:96-182). It enforces process limits, allocates a proc_t and pid structure, and then searches for the next free PID.

if (nproc >= v.v_proc - 1) {

if (nproc == v.v_proc) {

syserr.procovf++;

return -1;

}

if (cond & NP_NOLAST)

return -1;

}

The Capacity Check (os/pid.c:111-118)

PID selection increments mpid, wraps at MAXPID, and skips any PID already present in the hash (os/pid.c:152-155). Once a free PID is found, it is inserted into the hash and linked to the new proc_t.

do {

if (++mpid == MAXPID)

mpid = minpid;

} while (pid_lookup(mpid) != NULL);

The Next Free Name (os/pid.c:152-155)

Figure 1.6.2:

Figure 1.6.2: pid_assign() Allocation Path

Reference Counts and Exit

PIDs are shared by process groups and sessions. The registry uses reference counts to keep an ID alive while any structure still points to it. PID_HOLD increments the count; PID_RELE and pid_rele() remove the entry from the hash when the count reaches zero (os/pid.c:188-206).

When a process exits, pid_exit() removes it from the active list, releases its process group and session references, and decrements the PID entry (os/pid.c:212-241). Only after these steps does the PID become available for reuse.

Figure 1.6.3:

Figure 1.6.3: pid_exit() and Release

Lookup and Group Find

prfind() locates a process by PID via the hash and checks whether the slot is active (os/pid.c:248-257). pgfind() does the same for process groups, returning the group’s linked list (os/pid.c:265-276). These lookups are fundamental to signaling, job control, and /proc traversal.

The low-level hash walk is handled by pid_lookup(), which scans a bucket for a matching pid_id (os/pid.c:61-72).

for (pidp = HASHPID(pid); pidp; pidp = pidp->pid_link) {

if (pidp->pid_id == pid) {

ASSERT(pidp->pid_ref > 0);

break;

}

}

The Registry Lookup (os/pid.c:65-71)

This tight loop is what makes signals and /proc queries fast. The registry is only as useful as its lookup speed.

Minimum PID and /proc Slots

The allocator maintains a moving floor for PID reuse. pid_setmin() advances minpid to mpid + 1 (os/pid.c:75-79), ensuring that recently used PIDs are not immediately recycled. This reduces the chance of PID reuse races in parent/child bookkeeping.

The code also reserves a /proc directory slot for each process. A procent entry is pulled from the free list, linked to the new proc_t, and later returned in pid_exit() (os/pid.c:158-166, 223-227). The registry is thus tied to the /proc filesystem: every PID has a directory entry while it is active.

These two mechanisms keep the ledger consistent: names are not reused too quickly, and visibility in /proc stays in lockstep with process lifetime.

The Ghost of SVR4: We kept a modest hash table and a monotonic counter for IDs. Modern kernels use PID namespaces, per-container ranges, and larger ID spaces, but the registry rule is the same: never reuse a name while someone still holds a claim to it.

The Ledger Closes

PID management is a registry of identities. It assigns numbers, tracks them in a hash, and retires them only when all references are gone. The clerk’s ledger ensures that every process has a unique name and that no name is reused too soon.

Credentials and Access Control: The Seals, the Ledger, and the Wax

Imagine a courthouse where every petition bears a wax seal. The seal is not the person, but it carries the person’s authority. Clerks do not know the petitioner; they only read the seal. If a seal changes, the clerk must ensure no other parchment was stamped with the old impression.

SVR4’s credentials are those seals. The cred_t structure encodes user IDs and groups, and the kernel shares and duplicates these seals with strict reference counts to avoid accidental authority leaks.

The Seal: struct cred

The credential structure is defined in sys/cred.h (sys/cred.h:20-30). It holds effective, real, and saved IDs, as well as supplementary groups.

typedef struct cred {

ushort cr_ref; /* reference count */

ushort cr_ngroups; /* number of groups in cr_groups */

uid_t cr_uid; /* effective user id */

gid_t cr_gid; /* effective group id */

uid_t cr_ruid; /* real user id */

gid_t cr_rgid; /* real group id */

uid_t cr_suid; /* saved user id */

gid_t cr_sgid; /* saved group id */

gid_t cr_groups[1];/* supplementary group list */

} cred_t;

The Seal Structure (sys/cred.h:20-29)

Two distinctions matter:

- Effective IDs (

cr_uid,cr_gid) decide access checks. - Real/saved IDs (

cr_ruid,cr_suid) preserve the original identity and allow privilege drops and restorations.

Figure 1.7.1: Credentials Shared Across Processes

Figure 1.7.1: Credentials Shared Across Processes

Credentials - Royal Seals

Credentials - Royal Seals

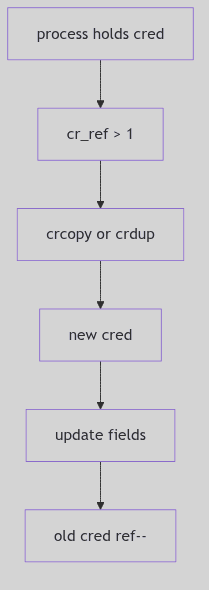

Reference Counts and Copy-on-Write

Credentials are shared to reduce memory churn. When a process needs to modify its credentials, it must first obtain a private copy. The core routines live in os/cred.c.

struct cred *

crget()

{

if (crfreelist) {

cr = &crfreelist->cl_cred;

crfreelist = ((struct credlist *)cr)->cl_next;

} else

cr = (struct cred *)kmem_alloc(crsize, KM_SLEEP);

struct_zero((caddr_t)cr, sizeof(*cr));

crhold(cr);

return cr;

}

The Blank Seal (os/cred.c:92-109, abridged)

crdup() and crcopy() both duplicate the seal, but they differ in how they treat the original:

crdup()creates a new copy and leaves the old intact (os/cred.c:153-162).crcopy()duplicates and then frees the old one (os/cred.c:137-147).

Reference counts are decremented in crfree(); when the count reaches zero the seal is returned to the freelist (os/cred.c:118-129). The courthouse reuses its wax only when no parchments remain.

Figure 1.7.2: Copy-on-Write and Reference Counting

Figure 1.7.2: Copy-on-Write and Reference Counting

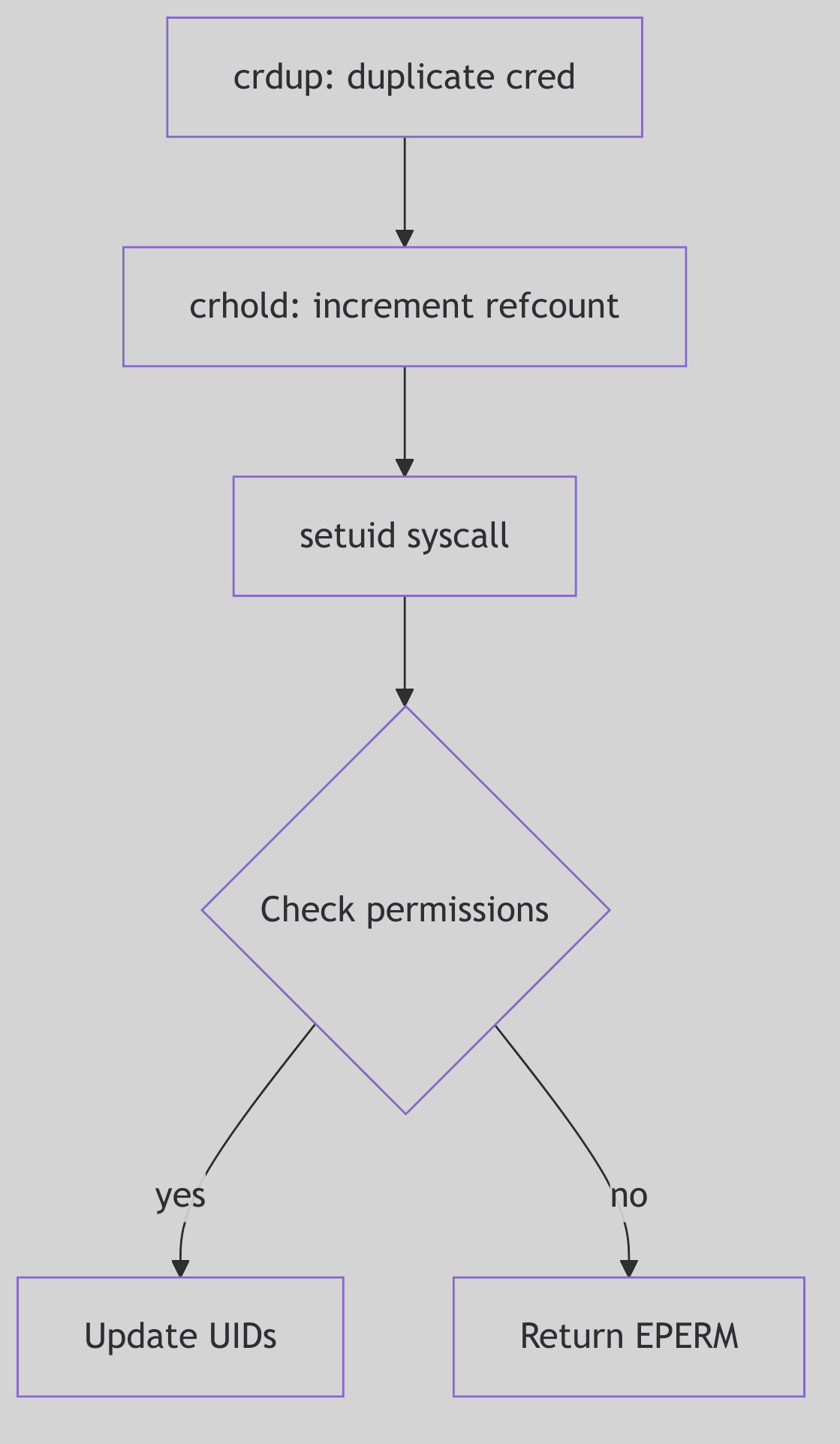

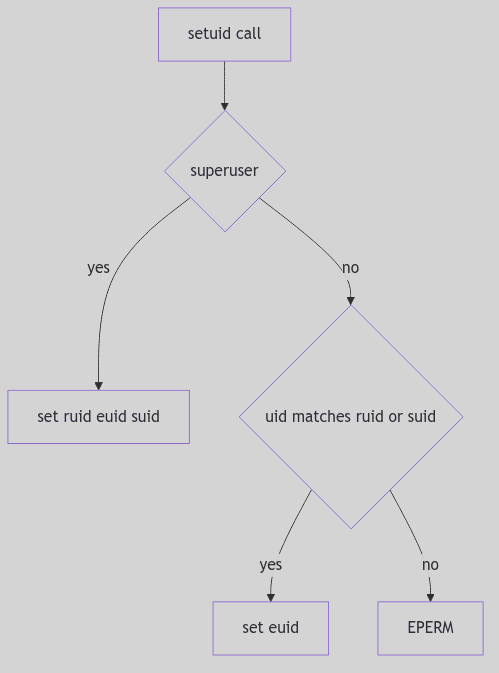

The Setuid Ritual

setuid() is the classic credential-changing system call. SVR4 enforces the rules in os/scalls.c (os/scalls.c:221-264): a non-root process may only switch its effective UID to its real or saved UID, while a superuser may set all three.

if (u.u_cred->cr_uid

&& (uid == u.u_cred->cr_ruid || uid == u.u_cred->cr_suid)) {

u.u_cred = crcopy(u.u_cred);

u.u_cred->cr_uid = uid;

} else if (suser(u.u_cred)) {

u.u_cred = crcopy(u.u_cred);

u.u_cred->cr_uid = uid;

u.u_cred->cr_ruid = uid;

u.u_cred->cr_suid = uid;

} else

error = EPERM;

The Setuid Decision (os/scalls.c:244-264, abridged)

The saved UID is the lockbox: a setuid program can drop privileges to the real UID and later regain them by restoring the saved UID, all within the rules of the seal.

Figure 1.7.3: Switching Identities with

Figure 1.7.3: Switching Identities with setuid()

Superuser Recognition

The suser() helper is a simple test with an important side effect: if the effective UID is zero, the kernel sets an accounting flag and returns success (os/cred.c:181-188). This is the courthouse clerk noting that a royal seal was presented.

The Ghost of SVR4: Our seals were simple: IDs and groups, reference counted and shared. Modern systems have capabilities, namespaces, and per-thread credentials, but the same rule remains. Authority must be explicit, copied when it changes, and never leaked across unrelated processes.

The Seal Is Set

Credentials are the kernel’s identity ledger. They are shared, copied with care, and enforced on every privileged action. The wax is not the person, but the courthouse will only honor the seal.

Messages: The Town Courier and the Ledger of Pigeons

Imagine a bustling town with a central courier office. Citizens drop sealed notes into labeled pigeonholes, each hole marked by a number and a type. Couriers arrive to collect only the type they expect. The clerk does not read the letters; she keeps a ledger, counts the paper, and ensures the pigeonholes never overflow.

SVR4’s System V message queues are that courier office. Messages are typed, queued, and delivered asynchronously. The kernel keeps a ledger for each queue, a free list of message headers, and a shared message pool that holds the actual text.

The Queue Ledger: struct msqid_ds

The message queue descriptor in sys/msg.h tracks permissions, queue pointers, and accounting (sys/msg.h:56-71).

struct msqid_ds {

struct ipc_perm msg_perm; /* operation permission struct */

struct msg *msg_first; /* ptr to first message on q */

struct msg *msg_last; /* ptr to last message on q */

ulong msg_cbytes; /* current # bytes on q */

ulong msg_qnum; /* # of messages on q */

ulong msg_qbytes; /* max # of bytes on q */

pid_t msg_lspid; /* pid of last msgsnd */

pid_t msg_lrpid; /* pid of last msgrcv */

time_t msg_stime; /* last msgsnd time */

long msg_pad1; /* reserved for time_t expansion */

time_t msg_rtime; /* last msgrcv time */

long msg_pad2; /* time_t expansion */

time_t msg_ctime; /* last change time */

long msg_pad3; /* time expansion */

long msg_pad4[4]; /* reserve area */

};

The Courier Ledger (sys/msg.h:56-72)

Two fields govern flow:

msg_cbytescounts the current bytes in the queue.msg_qbytessets the maximum, enforcing backpressure.

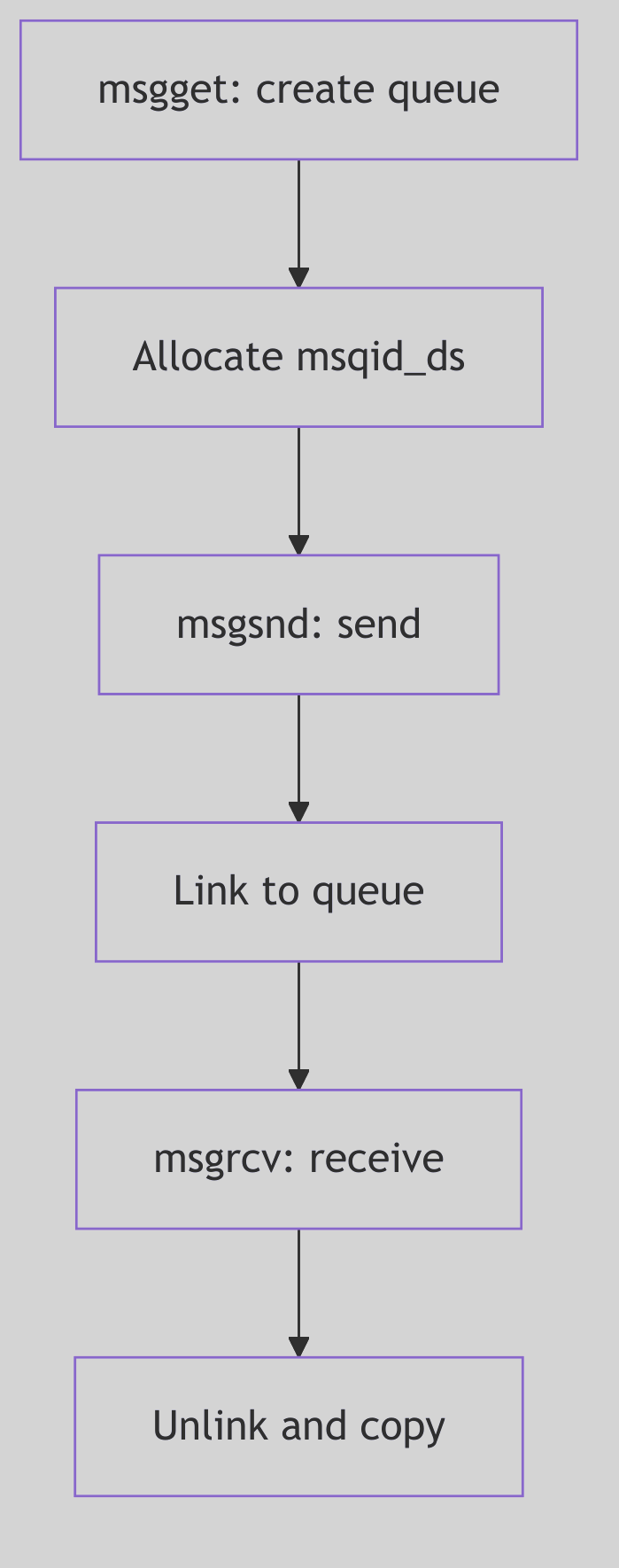

Figure 1.8.1: Queue Ledger and Message Links

Figure 1.8.1: Queue Ledger and Message Links

Messages IPC - Post Box

Messages IPC - Post Box

The Message Header: struct msg

Each enqueued message has a header that links it into the queue and points into the shared message pool (sys/msg.h:136-141).

struct msg {

struct msg *msg_next; /* ptr to next message on q */

long msg_type; /* message type */

ushort msg_ts; /* message text size */

short msg_spot; /* message text map address */

};

The Pigeon Tag (sys/msg.h:136-141)

The msg_spot field is the index into the message pool, allocated from a resource map. This is why the kernel keeps a free list of headers (msgfp) and a separate message text map (msgmap).

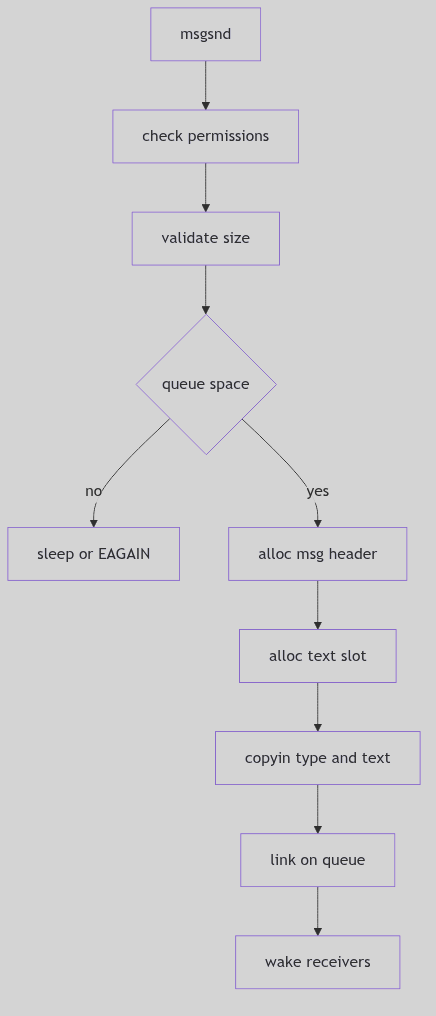

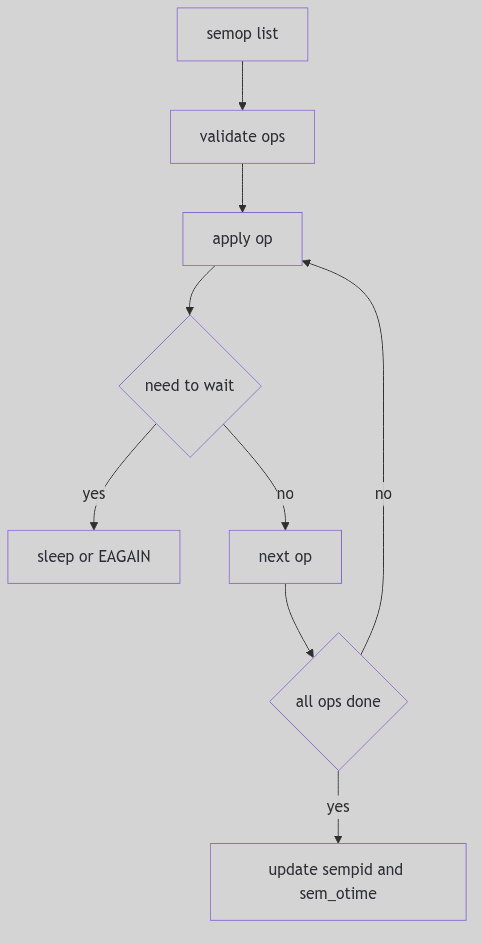

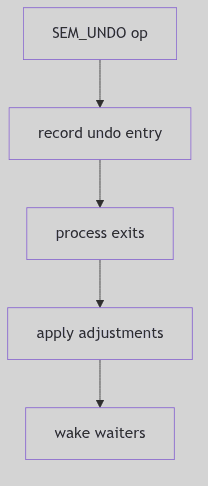

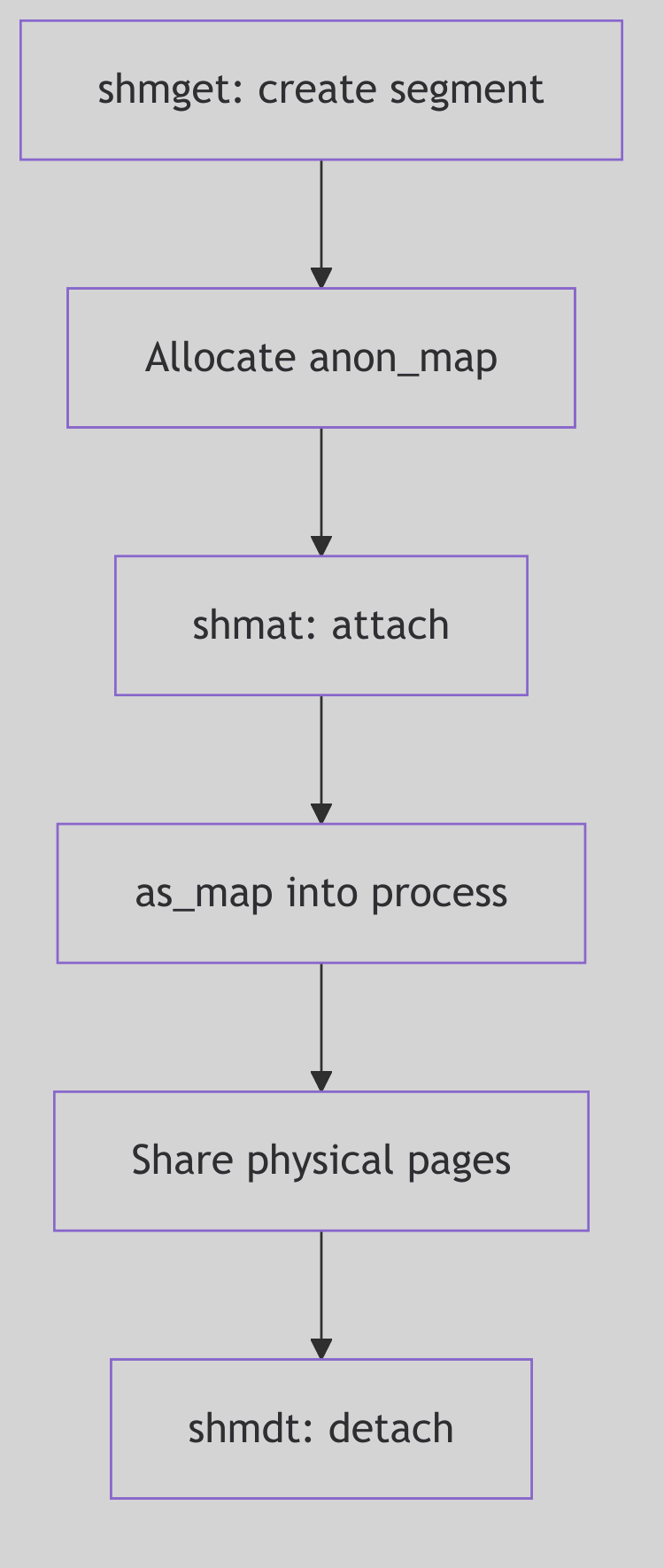

Sending a Message: msgsnd()

The send path in os/msg.c checks permissions, validates size, waits for space, and then copies the message into the pool (os/msg.c:498-648).

Key steps:

- Permission check via

ipcaccess. - Validate size against

msginfo.msgmax. - Block or fail if

msg_cbytes + msgsz > msg_qbytes. - Allocate header and text spot from

msgfpandmsgmap. - Copy in type and text, update queue counters.

if (cnt + qp->msg_cbytes > (uint)qp->msg_qbytes) {

if (uap->msgflg & IPC_NOWAIT) {

error = EAGAIN;

goto msgsnd_out;

}

qp->msg_perm.mode |= MSG_WWAIT;

if (sleep((caddr_t)qp, PMSG|PCATCH))

return EINTR;

goto getres;

}

The Queue Full Decision (os/msg.c:544-567)

If receivers are waiting, msgsnd() clears MSG_RWAIT and wakes them once the new message lands (os/msg.c:641-645). The clerk rings the bell when a pigeon arrives.

Figure 1.8.2:

Figure 1.8.2: msgsnd() Allocation and Enqueue

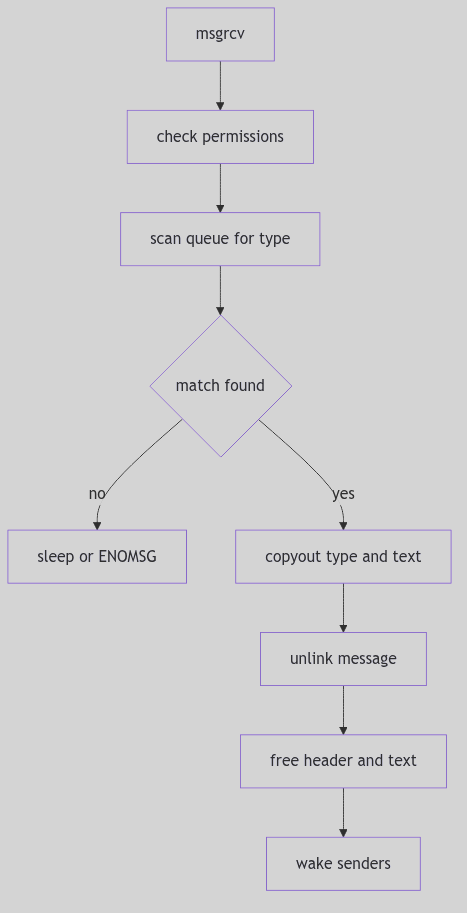

Receiving a Message: msgrcv()

The receive path walks the queue, matching by type. It handles three cases (os/msg.c:429-447):

msgtyp == 0: take the first message.msgtyp > 0: take the first message with that exact type.msgtyp < 0: take the message with the lowest type that is <=-msgtyp.

pmp = NULL;

mp = qp->msg_first;

if (uap->msgtyp == 0)

smp = mp;

else

for (; mp; pmp = mp, mp = mp->msg_next) {

if (uap->msgtyp > 0) {

if (uap->msgtyp != mp->msg_type)

continue;

smp = mp;

spmp = pmp;

break;

}

if (mp->msg_type <= -uap->msgtyp) {

if (smp && smp->msg_type <= mp->msg_type)

continue;

smp = mp;

spmp = pmp;

}

}

if (smp) {

if ((unsigned)uap->msgsz < smp->msg_ts) {

if (!(uap->msgflg & MSG_NOERROR)) {

error = E2BIG;

goto msgrcv_out;

} else

sz = uap->msgsz;

} else

sz = smp->msg_ts;

if (copyout((caddr_t)&smp->msg_type, (caddr_t)uap->msgp,

sizeof(smp->msg_type))) {

error = EFAULT;

goto msgrcv_out;

}

if (sz

&& copyout((caddr_t)(msg + msginfo.msgssz * smp->msg_spot),

(caddr_t)uap->msgp + sizeof(smp->msg_type), sz)) {

error = EFAULT;

goto msgrcv_out;

}

rvp->r_val1 = sz;

qp->msg_cbytes -= smp->msg_ts;

qp->msg_lrpid = u.u_procp->p_pid;

qp->msg_rtime = hrestime.tv_sec;

wflag = NOPRMPT;

msgfree(qp, spmp, smp, NOPRMPT);

goto msgrcv_out;

}

if (uap->msgflg & IPC_NOWAIT) {

error = ENOMSG;

goto msgrcv_out;

}

qp->msg_perm.mode |= MSG_RWAIT;

*lockp = 0;

wakeprocs(lockp, wflag);

if (sleep((caddr_t)&qp->msg_qnum, PMSG|PCATCH))

return EINTR;

goto findmsg;

The Type Selection Walk (os/msg.c:419-476, excerpt)